When implementing new features, it is always a good idea to test them, preferably with automated acceptance tests. Yet there are many pitfalls to avoid if the testing effort is to pay off. Just last week, I encountered a new one (at least for me): the impatient test.

The new feature was basically a long-running background operation that presents its result in a table on the GUI. If the operation succeeds, date and time are displayed in the corresponding cell of the table; if not, the cell is left untouched (i.e. it stays empty if there has been no successful run yet, or it keeps showing the date of the last successful run).

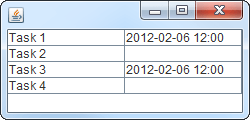

During the test, four tasks were to be completed by the background operation, two of which were supposed to succeed and the others to fail. So, the expected result was something like:

Having obeyed the “fail-first” guideline and having seen the test pass later on, I was quite sure the test and the feature worked as intended. Yet, manual testing proved otherwise. With the exact same scenario, task 4 always succeeded.

In fact, there was a bug that caused task 4 to result in a false-positive. But why did the automated test not uncover this flaw? Let’s recall what the test does:

- Prepare the test environment and the application

- Start the background operation comprised of tasks 1-4

- Wait

- Evaluate/assert the results

- Clean up

Some investigation revealed that the test simply did not wait long enough for the background operation to finish, i.e. the results were evaluated before task 4 had completed. At that moment the cell for task 4 was still empty, which is exactly what the test expected of a failed task. The false positive appeared only after the test had already checked for it.
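The race can be reproduced in a few lines. The following is only a sketch under stated assumptions, not the actual test or application code: the table is modelled as a map from task number to timestamp (a missing key stands for an empty cell), and a latch forces the hypothetical buggy task 4 to finish only after the early check, so the outcome does not depend on timing.

```java
import java.time.LocalDateTime;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;

class ImpatientTestSketch {

    /**
     * Returns two flags: whether the cell of task 4 was empty when the
     * impatient test looked at it, and whether it was still empty once
     * task 4 had really finished.
     */
    static boolean[] run() {
        // Assumption: task number -> timestamp of last success; no entry = empty cell.
        Map<Integer, String> table = new ConcurrentHashMap<>();
        CountDownLatch earlyCheckDone = new CountDownLatch(1);

        Thread task4 = new Thread(() -> {
            try {
                // Models "task 4 is still running while the test evaluates".
                earlyCheckDone.await();
                // Hypothetical bug: task 4 should fail but records a success.
                table.put(4, LocalDateTime.now().toString());
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        task4.start();

        // The impatient test evaluates now: the cell is empty, so the
        // assertion "task 4 failed" passes.
        boolean emptyAtEarlyCheck = !table.containsKey(4);

        // Only afterwards does task 4 finish and write its false positive.
        earlyCheckDone.countDown();
        try {
            task4.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        boolean emptyAfterFinish = !table.containsKey(4);

        return new boolean[] { emptyAtEarlyCheck, emptyAfterFinish };
    }

    public static void main(String[] args) {
        boolean[] flags = run();
        System.out.println("empty at early check: " + flags[0]);
        System.out.println("empty after finish:   " + flags[1]);
    }
}
```

The point of the sketch is that "untouched cell" and "not finished yet" look identical to the test, so a check that runs too early cannot tell a failed task from a running one.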

Once I had spotted the source of the problem, several possible solutions came to mind, ranging from “wait longer in the test” to “explicitly display unfinished runs in the application”. The most elegant and practical one is to have the test wait for the background operation to finish instead of waiting for a fixed period, even though this required some more infrastructure.
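One way to wait for completion rather than for a fixed period is to let the operation signal when its last task is done. The sketch below uses a `CountDownLatch` for that; it is an illustration, not the infrastructure we actually built. `BackgroundOperation`, `awaitCompletion` and the assumption that tasks 1 and 2 succeed while tasks 3 and 4 fail are all hypothetical.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

class PatientTestSketch {

    /** Hypothetical stand-in for the real background operation. */
    static class BackgroundOperation {
        final Map<Integer, Boolean> results = new ConcurrentHashMap<>();
        private final int taskCount;
        private final CountDownLatch finished;

        BackgroundOperation(int taskCount) {
            this.taskCount = taskCount;
            this.finished = new CountDownLatch(taskCount);
        }

        void start(ExecutorService executor) {
            for (int task = 1; task <= taskCount; task++) {
                final int id = task;
                executor.submit(() -> {
                    try {
                        Thread.sleep(50L * id);   // simulated work
                        results.put(id, id <= 2); // assumption: tasks 1-2 succeed
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    } finally {
                        finished.countDown();     // signal "this task is done"
                    }
                });
            }
        }

        /** Blocks until every task has finished or the timeout elapses. */
        boolean awaitCompletion(long timeout, TimeUnit unit) throws InterruptedException {
            return finished.await(timeout, unit);
        }
    }

    /** Starts the operation, waits for it to finish, then returns the results. */
    static Map<Integer, Boolean> runAndWait() {
        ExecutorService executor = Executors.newFixedThreadPool(2);
        try {
            BackgroundOperation operation = new BackgroundOperation(4);
            operation.start(executor);
            // The timeout is only a safety net so a hung run fails the test
            // instead of blocking it forever.
            if (!operation.awaitCompletion(5, TimeUnit.SECONDS)) {
                throw new IllegalStateException("background operation did not finish in time");
            }
            return operation.results;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        } finally {
            executor.shutdownNow();
        }
    }

    public static void main(String[] args) {
        System.out.println(runAndWait());
    }
}
```

With this shape the assertions run only after the operation has reported completion, so a slow task 4 can no longer slip past the check.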

Though acceptance testing is a great tool for developing software, this experience reminded me that flawed or not quite correct tests can lure you into a false sense of security if you pay too little attention. Manual testing in addition to automated testing may help to avoid these pitfalls.

I have found the project http://code.google.com/p/awaitility/ useful in such cases. It polls until a specified condition (expressed in a nice fluent API) is fulfilled. Synchronizing the test with the code via the java.util.concurrent Lock and Condition constructs might be cleaner, but this is simple, quick and non-invasive.
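For illustration, the polling idea can be sketched in plain Java. This is not Awaitility's API; `pollUntil` and its parameters are hypothetical names chosen for this example, which only shows the general technique of checking a condition repeatedly until it holds or a timeout expires.

```java
import java.util.concurrent.atomic.AtomicBoolean;
import java.util.function.BooleanSupplier;

class PollingSketch {

    /**
     * Checks the condition every intervalMillis until it is true or
     * timeoutMillis has passed. Returns true if the condition was met.
     */
    static boolean pollUntil(BooleanSupplier condition, long timeoutMillis, long intervalMillis) {
        long deadline = System.nanoTime() + timeoutMillis * 1_000_000L;
        while (true) {
            if (condition.getAsBoolean()) {
                return true;
            }
            if (System.nanoTime() - deadline >= 0) {
                return false; // timed out without the condition becoming true
            }
            try {
                Thread.sleep(intervalMillis);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                return false;
            }
        }
    }

    /** Simulated background work that sets a flag after a short delay. */
    static boolean demo() {
        AtomicBoolean done = new AtomicBoolean(false);
        Thread worker = new Thread(() -> {
            try {
                Thread.sleep(100);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
            done.set(true);
        });
        worker.start();
        // Poll every 10 ms, give up after 5 s.
        return pollUntil(done::get, 5_000, 10);
    }

    public static void main(String[] args) {
        System.out.println("condition met: " + demo());
    }
}
```

Polling needs no hooks in the code under test, which is what makes it non-invasive; the price is a small delay of up to one polling interval.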

Hi Tomáš,

Thanks for the hint. I will definitely have a look at the project.

As far as I can tell from a brief look at awaitility, it is pretty similar to the solution we came up with, yet our solution is much more tailored to the specific problem.