This blog post presents a programming technique that I have been using more often in recent months. It doesn’t claim that this technique is superior to or more feasible than others. It’s just a story about a different solution to an old programming problem.

Let’s program a class hierarchy for animals, in particular for mammals and birds. You probably know where this is heading, but let’s start with a common solution.

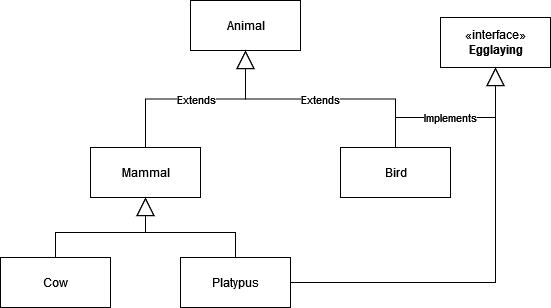

Both mammals and birds behave like animals, so they are subclasses of it. Birds have the additional behaviour of laying eggs for reproduction. We indicate this feature by implementing the Egglaying interface.

Mammals feed their offspring by giving them milk. There are two mammals in our system, a cow and a platypus. The cow behaves like the typical mammal and gives a lot of milk. The platypus also feeds its young with milk, but only after they have hatched from their eggs. Yes, the platypus is a rare exception in that it is both a mammal and egg-laying. We indicate this odd fact by implementing the Egglaying interface, too.
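As a minimal sketch, the hierarchy described so far could look like the following in Java. The method names `lactate()` and `breed()` are the ones used later in this post; `name()`, the returned strings, the abstract `Bird` class and the wrapper class `AnimalHierarchy` are assumptions made only to keep the example self-contained.

```java
public class AnimalHierarchy {

    // The additional behaviour of laying eggs for reproduction.
    interface Egglaying {
        String breed();
    }

    abstract static class Animal {
        // Hypothetical helper so that the example can produce readable output.
        abstract String name();
    }

    abstract static class Mammal extends Animal {
        String lactate() {
            return name() + " gives milk";
        }
    }

    // All birds lay eggs, so the interface sits on the Bird type itself.
    abstract static class Bird extends Animal implements Egglaying {
        @Override
        public String breed() {
            return name() + " lays an egg";
        }
    }

    // The typical mammal: no Egglaying in sight.
    static class Cow extends Mammal {
        @Override
        String name() {
            return "Cow";
        }
    }

    // The exception: a mammal that also implements Egglaying.
    static class Platypus extends Mammal implements Egglaying {
        @Override
        String name() {
            return "Platypus";
        }

        @Override
        public String breed() {
            return name() + " lays an egg";
        }
    }

    public static void main(String[] args) {
        Mammal cow = new Cow();
        Mammal platypus = new Platypus();
        System.out.println(cow.lactate());
        System.out.println(platypus.lactate());
        // Nothing in the Mammal type hints at the fact that this check can succeed.
        System.out.println(platypus instanceof Egglaying);
    }
}
```

Note that nothing in the `Mammal` type tells a reader that some of its subclasses are also `Egglaying`; that knowledge lives only in the `Platypus` class.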

If our code wants to access the additional methods of the Egglaying interface, it has to check whether the given object implements it and then cast it. I call this type of cast a “wildcast” because such casts seem to appear out of nowhere when reading the code and seemingly don’t lead up or down the typical type hierarchy. Why would a mammal lay eggs?

One approach that I have been using more often recently is to indicate the existence of a real wildcast with an Optional return type. In theory, you can wildcast from anywhere to anyplace you want. But only some of these jumps have a purpose in the domain. And an explicit casting method is a good way to highlight this purpose:

    import java.util.Optional;

    public abstract class Mammal {

        // Ordinary mammals are not egg-laying.
        public Optional<Egglaying> asEgglaying() {
            return Optional.empty();
        }
    }

The “asEgglaying()” method might return an Egglaying object, or it might not. As you can see, it returns an empty Optional by default. This means that no cow, horse, cat or dog has to think about laying eggs; they just aren’t into it.

    public class Platypus extends Mammal implements Egglaying {

        @Override
        public Optional<Egglaying> asEgglaying() {
            return Optional.of(this);
        }
    }

The platypus is another story. It is the exception to the rule and knows it. The code “Optional.of(this)” is typical for this coding technique.

A client that iterates over a collection of mammals can now incorporate the special case with more grace:

    for (Mammal each : List.of(mammals())) {
        each.lactate();
        each.asEgglaying().ifPresent(Egglaying::breed);
    }

Compare this code with a more classic approach using a wildcast:

    for (Mammal each : List.of(mammals())) {
        each.lactate();
        if (each instanceof Egglaying) {
            ((Egglaying) each).breed();
        }
    }

My biggest gripe with the classic approach is that the instanceof is necessary for the functionality, but not guided by the domain model. It comes as a surprise and has no connection to the Mammal type. In the Optional wildcast version, you can look up the callers of “asEgglaying()” and see all the special code that is written for the small number of mammals that lay eggs. In the classic approach, you need to search for conditional casts, or distinguish between code for birds and special mammal code when looking up the callers.
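To try both variants side by side, here is a self-contained sketch that puts the pieces from this post into one file. The wrapper class `WildcastDemo`, the `name()` helper, the returned strings and the two `with...` methods are hypothetical additions for illustration; they collect the results in a list so that the two loops can be compared directly.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

public class WildcastDemo {

    interface Egglaying {
        String breed();
    }

    abstract static class Mammal {
        // Hypothetical helper so that the example can produce readable output.
        abstract String name();

        String lactate() {
            return name() + " gives milk";
        }

        // The explicit casting method: empty for ordinary mammals.
        Optional<Egglaying> asEgglaying() {
            return Optional.empty();
        }
    }

    static class Cow extends Mammal {
        @Override
        String name() {
            return "Cow";
        }
    }

    static class Platypus extends Mammal implements Egglaying {
        @Override
        String name() {
            return "Platypus";
        }

        @Override
        public String breed() {
            return name() + " lays an egg";
        }

        // The exception to the rule announces itself.
        @Override
        Optional<Egglaying> asEgglaying() {
            return Optional.of(this);
        }
    }

    // Optional wildcast: the special case is reachable from the Mammal type.
    static List<String> withOptionalWildcast(List<Mammal> mammals) {
        List<String> log = new ArrayList<>();
        for (Mammal each : mammals) {
            log.add(each.lactate());
            each.asEgglaying().map(Egglaying::breed).ifPresent(log::add);
        }
        return log;
    }

    // Classic approach: conditional cast that the Mammal type knows nothing about.
    static List<String> withInstanceof(List<Mammal> mammals) {
        List<String> log = new ArrayList<>();
        for (Mammal each : mammals) {
            log.add(each.lactate());
            if (each instanceof Egglaying) {
                log.add(((Egglaying) each).breed());
            }
        }
        return log;
    }

    public static void main(String[] args) {
        List<Mammal> mammals = List.of(new Cow(), new Platypus());
        System.out.println(withOptionalWildcast(mammals));
        System.out.println(withInstanceof(mammals));
    }
}
```

Both loops produce the same result. The difference is only in where a reader can find the special case: through the callers of `asEgglaying()` in the first variant, and through a text search for `instanceof Egglaying` in the second.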

In my real-world projects, this “optional wildcast” style facilitates domain discovery through code completion and seems to lead me to more segregated type systems. These impressions are personal and probably biased, so I would like to hear about your experiences, or at least your opinions, in the comments.