I have lectured university students on software engineering for 25 years now. Some things have changed over time, some for the better, some for the worse. But one aspect worries me: the rise of buffet-style knowledge.

Let me explain what I mean by that term: In one of his books, the legendary physicist Richard Feynman describes a group of highly educated students that could recite every law of physics and all the details of materials, but were unable to act on this knowledge by combining some facts to come up with a solution to a common real-world problem. They ingested all the data, but didn’t digest it. It never amalgamated into a box of mental tools that could be applied to a problem just by thought experiment.

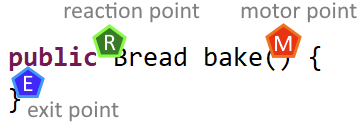

I recognize this pattern in my students, too. One example was working with a protocol that sends characters over a (physical!) wire. Each command was prefixed with an exclamation mark, followed by the mnemonic (an odd word, meaning a garbled mess of characters without innate meaning) and then the line ending. A typical specification for a command looked like this:

! QUIT <CR> <LF>

We approached the implementation by writing tests first, and sure enough, half the students asserted the existence of a literal “<CR><LF>” at the end of the line. Not the two characters “Carriage Return” and “Line Feed”, but the eight characters as seen. When I asked them whether they knew about character encodings and the ASCII code, they felt well versed in both topics.

After we combined their tests with the real client implementation, they saw the failed assertions, but couldn’t see their mistake. In their minds, the real client was simply missing the latter half of the command line. They were amazed when they discovered that there are characters that you just cannot see right away.

They studied all the characters that they saw and just assumed that was all there is. The simple question “how does a text editor know when a line of text is over?” perplexed them. They just never stopped to think about how this thing actually works.
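The mistake can be sketched in a few lines of Python. This is only an illustration, not the code from the course: `encode_command` is a hypothetical helper, and it assumes the spaces in the specification are notation only, so that nothing but the exclamation mark, the mnemonic and the line ending goes over the wire.

```python
# Hypothetical helper: builds the bytes a client would put on the wire.
# Assumption: the spaces in the written spec are notation, not payload.
def encode_command(mnemonic: str) -> bytes:
    return b"!" + mnemonic.encode("ascii") + b"\r\n"


wire = encode_command("QUIT")

# What half the students asserted: the spec notation taken literally.
literal_ending = b"<CR><LF>"
# What the spec means: two ASCII control characters.
actual_ending = b"\r\n"

assert not wire.endswith(literal_ending)
assert wire.endswith(actual_ending)

# Carriage Return is ASCII code 13, Line Feed is ASCII code 10.
assert list(actual_ending) == [13, 10]

# repr() makes the otherwise invisible characters visible as escapes.
print(repr(wire))
```

Printing the raw bytes to a terminal would only show `!QUIT` followed by a line break, which is exactly why the line ending is so easy to overlook.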

My theory about the origin of this symptom has two tracks. The first: Richard Feynman argued that the type of knowledge tests that the students have to endure is the root cause. My sample size is rather small, but I can see that being a big influence. If the tests ask for connections between different pools of knowledge, the students are forced to link their knowledge. Those students who are unable to digest the knowledge until it becomes a mental tool instead of just a reproducible fact tend to perish. If a test just asks for the reproduction of one topic, the digestion part of learning is an optional bonus on top of the study requirements.

Returning to our example above: If I ask for the reproduction of unit tests and another question about character encodings, both questions can be answered without knowledge about control characters (not visible, but still present).

If I combine both questions and ask for a correct assertion about the length of the quit command (7 characters), I can test who is able to write unit tests and who doesn’t know about control characters and asserts 13 characters instead. This type of question (one that requires knowledge transfer or fusion from several topics at once) is actively discouraged in today’s exams.
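The two numbers can be checked directly. The following is a minimal sketch, again assuming that the spaces in the written specification are not transmitted; the variable names are made up for illustration.

```python
# The command as it travels over the wire: "!" + "QUIT" + CR + LF.
correct = "!QUIT\r\n"
# The command as a student reads it off the page, notation included.
mistaken = "!QUIT<CR><LF>"

# 1 (exclamation mark) + 4 (mnemonic) + 2 (control characters)
assert len(correct) == 7
# 1 (exclamation mark) + 4 (mnemonic) + 4 ("<CR>") + 4 ("<LF>")
assert len(mistaken) == 13
```

A single length assertion like this cannot be written correctly from unit-testing knowledge or character-encoding knowledge alone; it needs both.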

But the second track of my theory is about the means of modern knowledge consumption. We don’t eat full knowledge meals anymore, we pick the flashy bits and skip the rest. If we could learn by just listening to music, we would skip three songs, fast forward the fourth to the exciting part and then ignore the rest of the album. Compare that to the days of linear music storage, when you were heavily nudged to listen to the whole album front to back. And while listening to the “other” songs, two things could happen that are missing from the picky approach: We had time to appreciate the exciting part even more and we could be surprised by a song that might be even better than the one we anticipated. Our music portfolio was not only curated by us, but by the artist, too.

Transfer this to software engineering and my grief can be rephrased as: Nobody reads whole books about a software topic anymore. In fact, I had several students acting aghast when I suggested they should read a book in order, front to back. To them, that was like wasting time with filler material. The thought that this “filler” might be a source of surprise, inspiration and additional curiosity had never crossed their minds before.

I get the comfort of quick answers from Stack Overflow, YouTube videos or a chatbot AI. I see the instant gratification nature of going on a highlight-driven journey through nearly all topics of modern programming. But we aren’t creatures that thrive and prosper on instant gratification. We don’t learn from quick success. We learn by trial and repetition. And we can’t cheat our biological heritage (at least not yet).

So, what is my point? I think that “broad knowledge”, the ability to combine different aspects in thought experiments and slow, creative learning will be more important in the future, especially with the availability of a talking encyclopedia right in front of us that can fill the minor gaps faster than we can articulate the question. But we need to know what to ask, and even more important – why we ask.