If you couldn’t attend the Schneide Dev Brunch on the 9th of August 2015, here is a summary of the main topics.

Two weeks ago, we held another Schneide Dev Brunch, a regular brunch on the second Sunday of every other (even) month, with the twist that all attendees want to talk about software development and various other topics. So if you bring a software-related topic along with your food, everyone has something to share. The brunch was well attended this time, with plenty to talk about. As usual, a lot of topics and chatter were exchanged. This recap tries to highlight the main topics of the brunch, but cannot repeat everything that was said. If you were there, you will probably find this list incomplete:

News on Docker

Docker is the hottest topic among developers and operators in 2015. No wonder we started chatting about it the minute we sat down. There are currently two interesting platform projects that provide runtime services for Docker: Tutum (commercial) and Rancher (open source). We all noted the names and will check them out. The next interesting fact was that Docker is programmed in the Go language. The team probably decided one day to give it a go.

Air Conditioning

We all experienced the hot spell this summer and observed that work in the traditional sense is impossible beyond 30° Celsius. Why there are still so few air-conditioned offices in our region is beyond our grasp, especially since it’s possible to power the air conditioning system with green electricity and let solar power deal with the problem that, well, the sun brought us. In 2015 alone, at least two work weeks were lost to the heat. The productivity gain from cooling should outweigh the costs.

License Management

We talked about how different organisations deal with the challenge of software license management. Nearly every big company has a tool that does essentially the same license management but has its own cool name. Other than that, bad license management is such a great productivity killer that even air conditioning wouldn’t offset it.

Windows 10

Even though we are largely operating-system agnostic, the release of Windows 10 is hot news. A few of our participants have already tried it and concluded that “it’s another Windows”. A rather confusing aspect is the split system settings. And you have to forgo the Cortana assistant if you want to avoid the data gathering.

Patch Management

A rather depressing topic was the discussion about security patches. I will just repeat two highlights: A substantial number of servers on the internet are still vulnerable to the Heartbleed attack. And if a car manufacturer starts a big recall campaign with cost-free replacements, less than 10 percent of the eligible cars are actually fixed on average. This explicitly includes safety-critical issues. That shouldn’t excuse us as an industry for our own shortcomings, and it’s not reassuring to see that other industries face the same problems.

Self-Driving Cars

We digressed to the hyped future topic of self-driving cars. I can’t repeat the complete discussion, but we agreed that those cars will hit the streets within the next ten years. The first use case will be freight transport, because the cargo doesn’t mind if the driver is absent and efficiency matters a lot in logistics. Plus, machines don’t need breaks. Ok, those were enough puns on the topic. Sorry.

Tests on Interfaces

An interesting question was how to build tests that can ensure a class or interface contract. Much like regression tests for recently broken functionality, compatibility tests should deal with backward compatibility issues in the interface. It turns out that the Eclipse Foundation gave the topic some thought and came up with an exhaustive list of aspects to check. There are even some tools that might come in handy if you want to compare two versions of an API.
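To make the idea of a contract test a bit more tangible, here is a minimal sketch in Python. Everything in it is made up for illustration: the `Stack` interface, the `ListStack` implementation and the `check_stack_contract` helper are hypothetical and were not part of the discussion. The point is only that the checks are written once against the interface and can then be run against every implementation, old or new.

```python
from typing import Callable, List, Protocol


class Stack(Protocol):
    """Hypothetical interface whose contract we want to pin down."""

    def push(self, item: int) -> None: ...
    def pop(self) -> int: ...
    def is_empty(self) -> bool: ...


class ListStack:
    """One implementation; any other one must pass the same checks."""

    def __init__(self) -> None:
        self._items: List[int] = []

    def push(self, item: int) -> None:
        self._items.append(item)

    def pop(self) -> int:
        if not self._items:
            raise IndexError("pop from empty stack")
        return self._items.pop()

    def is_empty(self) -> bool:
        return not self._items


def check_stack_contract(make_stack: Callable[[], Stack]) -> bool:
    """Run the contract checks against a fresh instance from the factory."""
    stack = make_stack()
    assert stack.is_empty()
    stack.push(1)
    stack.push(2)
    assert not stack.is_empty()
    assert stack.pop() == 2  # last in, first out
    assert stack.pop() == 1
    assert stack.is_empty()
    try:
        stack.pop()
    except IndexError:
        return True  # the documented error behaviour is part of the contract
    raise AssertionError("pop on an empty stack must raise IndexError")
```

Calling `check_stack_contract(ListStack)` runs the whole contract against one implementation. A new version of the class, or a second implementation, only needs to be passed to the same function.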

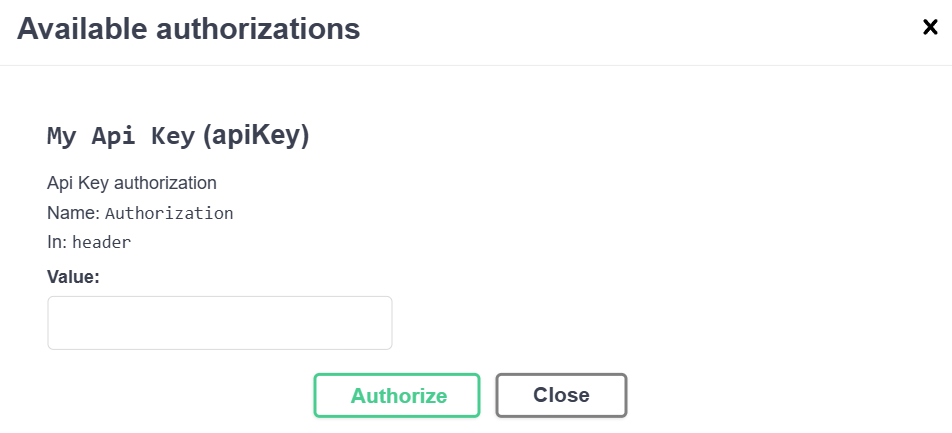

API Design

When the topic of API design came up, some veterans of the Schneide Events immediately mentioned the API Design Fest we held in November 2013 to get our noses bloody on API design. Well, bleed we did. The most important take-away from the Fest was that if you plan to publish an API that can endure some years in production while being enhanced and improved, you just shouldn’t do it. Really, don’t do it, it’s probably a bad idea and you lack the required skill without even knowing it. If you want to find out for yourself, participate in or even host an API Design Fest.

And if you happen to design a web-based API, you might abandon backward compatibility by offering several distinct “versions” of a service’s API. The version is included in the API URL and acts more like a name than a version. This will ease your burden a bit. A nice reference resource might also be the PayPal API style guide.
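A small sketch of what “the version acts like a name” can mean in practice, written in plain Python without any web framework. The routes, handler names and response fields are invented for this example. Each version is simply a separate entry in the routing table, so `v2` is free to break with `v1`.

```python
from typing import Callable, Dict, Tuple


def get_user_v1(user_id: str) -> dict:
    # Hypothetical first version of the resource.
    return {"id": user_id, "name": "Ada Lovelace"}


def get_user_v2(user_id: str) -> dict:
    # v2 breaks compatibility on purpose: the name is split into two fields.
    return {"id": user_id, "first_name": "Ada", "last_name": "Lovelace"}


# The version is just part of the lookup key, like any other name.
ROUTES: Dict[Tuple[str, str], Callable[[str], dict]] = {
    ("v1", "users"): get_user_v1,
    ("v2", "users"): get_user_v2,
}


def dispatch(path: str) -> dict:
    """Resolve a path such as /v2/users/42 to the handler for that version."""
    parts = path.strip("/").split("/")
    if len(parts) != 3:
        raise LookupError(f"unsupported path: {path}")
    version, resource, item_id = parts
    handler = ROUTES.get((version, resource))
    if handler is None:
        raise LookupError(f"unknown API: /{version}/{resource}")
    return handler(item_id)
```

Old clients keep calling `/v1/users/42` and never see the new fields, while new clients opt in by using the `/v2/` prefix.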

Let’s just agree that API design is really hard and should not be done until it’s clear you don’t suffer from Dunning-Kruger effect symptoms too much.

Performance Tests

We talked about the most effective setup of performance tests. There were a lot of ideas, and we framed the topic around these cornerstones:

- There was a nearly heroic effort from the Eclipse development team to measure their IDE performance, especially to compare different versions of the IDE. The Eclipse Test & Performance Tools Platform (TPTP) was (as in: discontinued) a toolkit of interesting approaches to the topic. The IDE itself was measured by performance fingerprints like this example from 2011. As far as we know, all those things ceased to exist.

- At the last Java Forum Stuttgart, there was a talk about performance testing by an experienced tester who loved to give specific advice. The slides can be viewed online in German (well, not really, but the talk was).

- The book Release It! has a lot of insights into this topic. It’s one of the bigger books on the Pragmatic Bookshelf.

- The engineers at Netflix actually did a lot of thinking about the topic. They came up with Hystrix, a resilience library that aims to make it easier to prevent complete system blackouts. They also came up with Chaos Monkey, a service that makes it easier to have a complete system blackout. If we can say anything about Netflix, it is that they definitely approach their problems from the right angle.
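Hystrix is built around the circuit breaker pattern, so here is a rough sketch of that pattern in Python. To be clear, this is not the Hystrix API (Hystrix is a Java library). The class name, the parameters and the injectable `clock` are assumptions made for this example. After a number of consecutive failures the breaker “opens” and answers with the fallback right away instead of hammering the failing service.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitBreaker:
    """Minimal circuit breaker: fail fast while a dependency is down."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after  # seconds to wait before a trial call
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, action: Callable[[], T], fallback: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                return fallback()   # open: do not even try the action
            self._opened_at = None  # half-open: allow one trial call
        try:
            result = action()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()  # (re)open the breaker
            return fallback()
        self._failures = 0  # a success closes the breaker again
        return result
```

A caller would wrap each remote call, for example `breaker.call(fetch_prices, lambda: cached_prices)`, where both names are placeholders for your own functions.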

Company Culture

Leaking over from the previous topic about effective performance-related measures, we talked about different company cultures, especially in regard to a centralized human resources department and a works council (German: Betriebsrat). We agreed that it is very difficult to maintain a certain culture during continued growth. We also agreed that culture trickles down from top management.

OpenGL

The last topic on this Dev Brunch was about the rendering of text or single characters in OpenGL. By using signed distance fields, you can render text more crisply and still only use cheap computation instructions. There is a paper from Valve on the topic that highlights the benefits and gives a list of additional reading. It’s always cool to learn about something simple that actually improves things.
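The core trick is small enough to sketch in a few lines. In a real renderer this would live in a fragment shader and the distance would be sampled from a glyph texture. The Python below is only a stand-in under those assumptions: a circle plays the role of the glyph, and the function names and the `softness` parameter are our own invention. Each pixel needs just one distance lookup and one `smoothstep` to get an anti-aliased edge.

```python
import math
from typing import List


def smoothstep(edge0: float, edge1: float, x: float) -> float:
    """Hermite interpolation between 0 and 1, as offered by shader languages."""
    t = min(1.0, max(0.0, (x - edge0) / (edge1 - edge0)))
    return t * t * (3.0 - 2.0 * t)


def circle_distance(x: float, y: float, radius: float) -> float:
    """Signed distance to a circle around the origin: negative means inside."""
    return math.hypot(x, y) - radius


def coverage(distance: float, softness: float = 0.5) -> float:
    """Map a signed distance to an alpha value between 0 and 1."""
    return 1.0 - smoothstep(-softness, softness, distance)


def rasterize(size: int, radius: float) -> List[List[float]]:
    """Compute the alpha value for every pixel of a size x size image."""
    half = (size - 1) / 2.0
    return [
        [coverage(circle_distance(col - half, row - half, radius))
         for col in range(size)]
        for row in range(size)
    ]
```

Pixels well inside the shape get full alpha, pixels well outside get none, and only the thin band around distance zero is blended. That is why the edge stays sharp even when the distance field itself is stored at low resolution.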

Epilogue

As usual, the Dev Brunch contained a lot more chatter and talk than listed here. The number of attendees makes for a unique experience every time. We are looking forward to the next Dev Brunch at the Softwareschneiderei. And as always, we are open to guests and future regulars. Just drop us a note and we’ll invite you over next time.