The concept of Test First (“TF”: write a failing test first, then make it green by writing exactly enough production code to do so) has always been very appealing to me. But I’ve never experienced the guiding effect that is described for Test Driven Development (“TDD”: apply a Test First approach to a problem in baby steps, letting the tests drive the production code). This led to considerable frustration and scepticism on my side. After many attempts and training sessions with experienced TDD practitioners, I concluded that while I grasped Test First and could apply it to everyday tasks, I wouldn’t be able to incorporate TDD into my process toolbox. My biggest grievance was that I couldn’t even tell why TDD failed for me.

The bad news is that TDD still lies outside my normal toolbox. The good news is that I can now pinpoint a specific area where I need training in order to learn TDD properly. This blog post tells the story of that revelation. I hope you can take away some ideas for your own progress, provided you’re no TDD master yet either.

A simple training session

In order to learn TDD, I always look for suitable problems to apply it to. While developing a repository difference tracker, the Diffibrillator, I came across a neat little task: merging the entries of several lists of commits into a single list ordered by commit date. I postponed implementing the required algorithm until I could do it in a relaxed TDD session. In the meantime, my mind began to spawn background processes about possible solutions. When I finally had a chance to start my session, one solution had already crystallized in my imagination:

An elegant solution

Given several input lists of commits, now used as queues, and one result list that is initially empty, repeat the following step until no more commits are pending in any input queue: compare the head commits of all input queues by their commit date, remove the most recent one and append it to the result list. (Each branch holds its newest commit at the head, and the result is ordered newest first, as the tests below expect.)

I nicknamed this approach the “PEZ algorithm” because each commit list acts like the old PEZ candy dispensers of my childhood, always handing out the topmost candy tablet when asked.
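The PEZ approach can be sketched in plain Java as follows. This is a minimal illustration under stated assumptions, not the Diffibrillator code: the `Commit` record with a numeric date is a hypothetical stand-in, each input list is assumed to hold its newest commit first, and, matching the tests below, the head with the most recent date is taken on every step. On equal dates the earlier queue wins.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical stand-in for the post's Commit type: an id plus a commit date.
record Commit(String id, long date) {}

final class PezMerge {

    // Repeatedly takes the most recent head commit across all queues.
    // Assumes every input list holds its newest commit first.
    static List<Commit> merge(List<List<Commit>> branches) {
        List<Deque<Commit>> queues = new ArrayList<>();
        for (List<Commit> branch : branches) {
            queues.add(new ArrayDeque<>(branch));
        }
        List<Commit> result = new ArrayList<>();
        while (true) {
            Deque<Commit> chosen = null;
            for (Deque<Commit> queue : queues) {
                if (queue.isEmpty()) {
                    continue;
                }
                // Strictly greater: on equal dates the earlier queue keeps priority.
                if (chosen == null || queue.peekFirst().date() > chosen.peekFirst().date()) {
                    chosen = queue;
                }
            }
            if (chosen == null) {
                return result; // every queue is exhausted
            }
            result.add(chosen.pollFirst());
        }
    }
}
```

Each step scans every queue head, so merging n commits from k branches takes O(n·k) comparisons, which is fine for a handful of branches.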

A Test First approach

Trying to break the problem down into baby-stepped unit tests, I fell into the “one-two-everything” trap once again. See for yourself which tests I wrote:

```java
@Test
public void emptyIteratorWhenNoBranchesGiven() throws Exception {
    Iterable<ProjectBranch> noBranches = new EmptyIterable<>();
    Iterable<Commit> commits = CombineCommits.from(noBranches).byCommitDate();
    assertThat(commits, is(emptyIterable()));
}
```

The first test only prepares the class’s interface, naming the methods and trying to establish a fluent coding style.

```java
@Test
public void commitsOfBranchIfOnlyOneGiven() throws Exception {
    final Commit firstCommit = commitAt(10L);
    final Commit secondCommit = commitAt(20L);
    final ProjectBranch branch = branchWith(secondCommit, firstCommit);
    Iterable<Commit> commits = CombineCommits.from(branch).byCommitDate();
    assertThat(commits, contains(secondCommit, firstCommit));
}
```

The second test was the inevitable “simple and dumb” starting point for a journey led by the tests (hopefully). It didn’t lead to any meaningful production code. Obviously, a bigger test scenario was needed:

```java
@Test
public void commitsOfSeveralBranchesInChronologicalOrder() throws Exception {
    final Commit commitA_1 = commitAt(10L);
    final Commit commitB_2 = commitAt(20L);
    final Commit commitA_3 = commitAt(30L);
    final Commit commitA_4 = commitAt(40L);
    final Commit commitB_5 = commitAt(50L);
    final Commit commitA_6 = commitAt(60L);
    final ProjectBranch branchA = branchWith(commitA_6, commitA_4, commitA_3, commitA_1);
    final ProjectBranch branchB = branchWith(commitB_5, commitB_2);
    Iterable<Commit> commits = CombineCommits.from(branchA, branchB).byCommitDate();
    assertThat(commits, contains(commitA_6, commitB_5, commitA_4, commitA_3, commitB_2, commitA_1));
}
```

Now we’re talking! If you give the CombineCommits class two branches with intertwined commit dates, the result is a chronologically ordered collection. The only problem with this test? It needed the complete 100 lines of algorithm code to turn green. There it is: the “one-two-everything” trap. The first two tests are mere finger exercises that don’t assert very much. The third test is usually the last one written for a long time, because it requires a lot of work on the production side. After this test, the implementation was mostly complete, with 130 lines of production code and a line coverage of nearly 98%.

There wasn’t much guidance from the tests; it was more a matter of holding back until a test finally allowed the whole thing to be written. Emotionally, the tests only kept me from jotting down the algorithm I had already envisioned, and when I finally got permission to “show off”, I dived into the production code and only returned when the whole thing was finished. A lot of ego went into those lines, but I didn’t realize it right away.

But wait, the test above leaves out a detail that needs to be specified explicitly: if two commits happen at the same time, the combiner should behave in a defined way. I declare that the order of the input queues is used as a secondary ordering criterion:

```java
@Test
public void decidesForFirstBranchIfCommitsAtSameDate() throws Exception {
    final Commit commitA_1 = commitAt(10L);
    final Commit commitB_2 = commitAt(10L);
    final Commit commitA_3 = commitAt(20L);
    final ProjectBranch branchA = branchWith(commitA_3, commitA_1);
    final ProjectBranch branchB = branchWith(commitB_2);
    Iterable<Commit> commits = CombineCommits.from(branchA, branchB).byCommitDate();
    assertThat(commits, contains(commitA_3, commitA_1, commitB_2));
}
```

This test didn’t improve the line coverage and was green right from the start, because the implementation already acted as required. There was no guidance in this test, only assurance.
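The secondary criterion can also be spelled out in a comparator instead of being implied by how the queue heads are scanned. The following is a hypothetical variant, not code from the post: it keeps one pending head per branch in a `java.util.PriorityQueue` ordered by date (newest first) and then by branch index, which reproduces the expectation of `decidesForFirstBranchIfCommitsAtSameDate`. `Commit` and `Head` are stand-in records.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.Iterator;
import java.util.List;
import java.util.PriorityQueue;

// Hypothetical stand-in for the post's Commit type.
record Commit(String id, long date) {}

// A pending head commit, the position of its branch, and the rest of that branch.
record Head(Commit commit, int branchIndex, Iterator<Commit> rest) {}

final class TieBreakingMerge {

    // Primary key: newest date first. Secondary key: lower branch index first.
    static final Comparator<Head> ORDER =
            Comparator.comparingLong((Head h) -> h.commit().date()).reversed()
                      .thenComparingInt(Head::branchIndex);

    static List<Commit> merge(List<List<Commit>> branches) {
        PriorityQueue<Head> heads = new PriorityQueue<>(ORDER);
        for (int i = 0; i < branches.size(); i++) {
            Iterator<Commit> it = branches.get(i).iterator();
            if (it.hasNext()) {
                heads.add(new Head(it.next(), i, it));
            }
        }
        List<Commit> result = new ArrayList<>();
        while (!heads.isEmpty()) {
            Head head = heads.poll();
            result.add(head.commit());
            // Refill from the same branch so it keeps competing for the next slot.
            if (head.rest().hasNext()) {
                heads.add(new Head(head.rest().next(), head.branchIndex(), head.rest()));
            }
        }
        return result;
    }
}
```

Because every branch has at most one entry in the queue and the branch index breaks every tie, the order is fully determined even though `PriorityQueue` itself makes no stability promise. Each step costs O(log k) for k branches.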

And that was my session: the four unit tests cover the anticipated algorithm completely, but they didn’t provide any guidance I could grasp. I was very disappointed, because the “one-two-everything” trap is a well-known anti-pattern from my previous TDD experiences, and I still fell right into it.

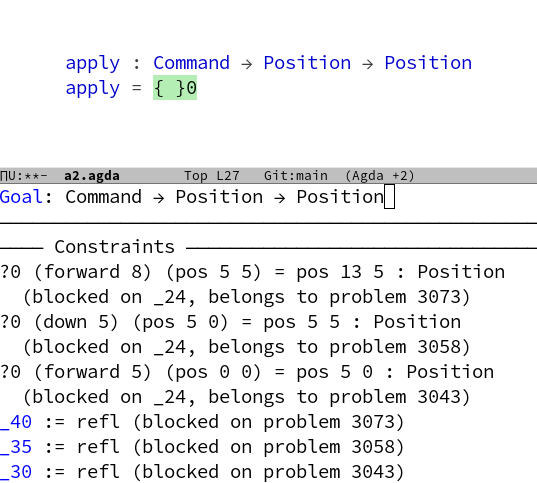

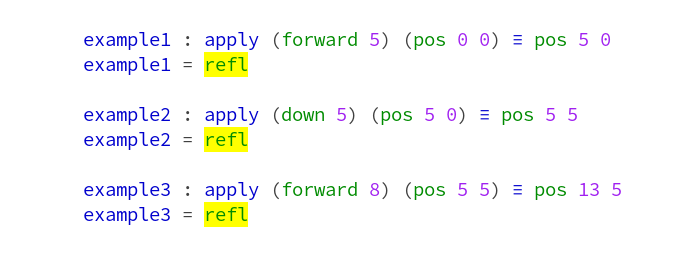

A second approach using TDD

I decided to delete my code again and pair with my co-worker Jens, who had formulated a theory about finding the next test by changing only one facet of the problem with each new test. Sounds interesting? It is! Let’s see where it got us:

```java
@Test
public void noBranchesResultsInEmptyTrail() throws Exception {
    CommitCombiner combiner = new CommitCombiner();
    Iterable<Commit> trail = combiner.getTrail();
    assertThat(trail, is(emptyIterable()));
}
```

The first test comes as no big surprise; it only sets “the mood”. Notice how we decided to keep the interface of the CommitCombiner class simple and plain as long as the tests don’t get cumbersome.

```java
@Test
public void emptyBranchesResultInEmptyTrail() throws Exception {
    ProjectBranch branchA = branchFor();
    CommitCombiner combiner = new CommitCombiner(branchA);
    assertThat(combiner.getTrail(), is(emptyIterable()));
}
```

The second test asserts only one thing more than the initial test: If the combiner is given empty commit queues (“branches”) instead of none like in the first test, it still returns an empty result collection (the commit “trail”).

With the single-facet approach, we may only change the tested scenario in one “domain dimension”, and only by the smallest possible amount. So we formulate a test that still uses only one branch, but with one commit in it:

```java
@Test
public void branchWithCommitResultsInEqualTrail() throws Exception {
    Commit commitA1 = commitAt(10L);
    ProjectBranch branchA = branchFor(commitA1);
    CommitCombiner combiner = new CommitCombiner(branchA);
    assertThat(combiner.getTrail(), Matchers.contains(commitA1));
}
```

With this test, there was the first meaningful appearance of production code. We kept it very simple and trusted our future tests to guide the way to a more complex version.
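The post doesn’t show this first production code. As a hedged illustration only, a “very simple” version at this point might look like the following. `Commit` and `ProjectBranch` are hypothetical stand-in records (`ProjectBranch` exposes `commits()`, as the final implementation later in the post does), and `branchFor` mirrors the test helper.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins for the post's Commit and ProjectBranch types.
record Commit(String id, long date) {}

record ProjectBranch(List<Commit> commits) {
    // Mirrors the tests' branchFor(...) helper; newest commit first.
    static ProjectBranch branchFor(Commit... commits) {
        return new ProjectBranch(List.of(commits));
    }
}

final class CommitCombiner {
    private final ProjectBranch[] branches;

    CommitCombiner(ProjectBranch... branches) {
        this.branches = branches;
    }

    // Deliberately naive: no sorting and no merging,
    // only the first branch's commits are passed through unchanged.
    Iterable<Commit> getTrail() {
        List<Commit> trail = new ArrayList<>();
        if (branches.length > 0) {
            trail.addAll(branches[0].commits());
        }
        return trail;
    }
}
```

This version ignores every branch but the first, which is exactly the kind of shortcut the later test `allBranchesAreUsed` is designed to expose.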

The next test introduces the central piece of domain knowledge into the production code, just by changing the number of commits on the only given branch from “one” to “many” (three):

```java
@Test
public void branchWithCommitsAreReturnedInOrder() throws Exception {
    Commit commitA1 = commitAt(10L);
    Commit commitA2 = commitAt(20L);
    Commit commitA3 = commitAt(30L);
    ProjectBranch branchA = branchFor(commitA3, commitA2, commitA1);
    CommitCombiner combiner = new CommitCombiner(branchA);
    assertThat(combiner.getTrail(), Matchers.contains(commitA3, commitA2, commitA1));
}
```

Notice how this requires the production code to come up with the notion of commit dates that can be compared and need to be ordered. We haven’t even introduced a second branch into the scenario yet, but we are already asserting that the most mission-critical functionality works: commit ordering.
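In the post’s final code, this notion shows up as an antichronological `Comparator` over a `Dated` interface. A minimal sketch of the same idea, assuming a hypothetical `Commit` record whose date is a plain `long`, builds the comparator with `Comparator.comparingLong` and reverses it to get the newest commit first:

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

// Hypothetical stand-in for the post's Commit type; the date is a plain long.
record Commit(String id, long date) {}

final class Antichronological {

    // Newest commit first: compare by date, then reverse the natural order.
    static final Comparator<Commit> NEWEST_FIRST =
            Comparator.comparingLong(Commit::date).reversed();

    // Returns a sorted copy; the input list is left untouched.
    static List<Commit> trailOf(List<Commit> branchCommits) {
        List<Commit> trail = new ArrayList<>(branchCommits);
        Collections.sort(trail, NEWEST_FIRST); // documented as a stable sort
        return trail;
    }
}
```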

Now we need to advance to another requirement: the ability to combine branches. But whatever we develop from now on can never break the most important aspect of our implementation without a failing test telling us.

```java
@Test
public void twoBranchesWithOnlyOneCommit() throws Exception {
    Commit commitA1 = commitAt(10L);
    ProjectBranch branchA = branchFor(commitA1);
    ProjectBranch branchB = branchFor();
    CommitCombiner combiner = new CommitCombiner(branchA, branchB);
    assertThat(combiner.getTrail(), Matchers.contains(commitA1));
}
```

You might say that we already knew about this behaviour of the production code when we added the test named “branchWithCommitResultsInEqualTrail”, but this test really is the assurance that things don’t change just because the number of branches changes.

Our production code didn’t need to advance as far as we could already anticipate, so we need another test dealing with multiple branches:

```java
@Test
public void allBranchesAreUsed() throws Exception {
    Commit commitA1 = commitAt(10L);
    ProjectBranch branchA = branchFor(commitA1);
    ProjectBranch branchB = branchFor();
    CommitCombiner combiner = new CommitCombiner(branchB, branchA);
    assertThat(combiner.getTrail(), Matchers.contains(commitA1));
}
```

Note that the only difference is the order in which the branches are given to the CommitCombiner. This simple test requires some important improvements in the production code. Try it for yourself to see the effect!
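To see the effect without the full project, here is a hypothetical before-and-after pair with stand-in `Commit` and `ProjectBranch` records. The first method models a shortcut that only looks at the first branch (an assumption; the post doesn’t show its intermediate code), and the second one walks all branches in the given order:

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins for the post's domain types.
record Commit(String id, long date) {}
record ProjectBranch(List<Commit> commits) {}

final class BranchCollection {

    // Shortcut: only the first branch counts. Enough for
    // twoBranchesWithOnlyOneCommit, but not for allBranchesAreUsed.
    static List<Commit> firstBranchOnly(ProjectBranch... branches) {
        List<Commit> trail = new ArrayList<>();
        if (branches.length > 0) {
            trail.addAll(branches[0].commits());
        }
        return trail;
    }

    // Improvement: collect the commits of every branch, in the order given.
    static List<Commit> allBranches(ProjectBranch... branches) {
        List<Commit> trail = new ArrayList<>();
        for (ProjectBranch branch : branches) {
            trail.addAll(branch.commits());
        }
        return trail;
    }
}
```

With an empty branch passed first, the shortcut returns an empty trail and the test fails; collecting all branches fixes it without breaking the previous test.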

Finally, it is time to formulate a test that brings the two facets of our algorithm together. We have tested the facets separately for so long now that this test feels like the first “real” test, asserting a “real” use case:

```java
@Test
public void twoBranchesWithOneCommitEach() throws Exception {
    Commit commitA1 = commitAt(10L);
    Commit commitB1 = commitAt(20L);
    ProjectBranch branchA = branchFor(commitA1);
    ProjectBranch branchB = branchFor(commitB1);
    CommitCombiner combiner = new CommitCombiner(branchA, branchB);
    assertThat(combiner.getTrail(), Matchers.contains(commitB1, commitA1));
}
```

If you compare this “full” test case to the third test case of my first approach, you’ll see that it lacks all the tangled complexity of the first try. The test can be clear and concise in its scenario because it can rely on the assurances of the previous tests. The third test in the first approach couldn’t rely on any meaningful single-faceted “support” test. That’s the main difference, and it was my error in the first approach: trying to cram more than one new facet into the next test, even putting all required facets in there at once. No wonder the production code needed “everything” when the test demanded it. No wonder there was no guidance from the tests when I wanted to reach all my goals at once.

Decomposing the problem at hand into independent “features” or facets is the most essential step to learn in order to advance from Test First to Test Driven Development. Finding a suitable “dramatic composition” for the tests is another important ability, but it can only be applied once the decomposition is done.

But wait, there is a fourth test in my first approach that needs a counterpart here, too:

```java
@Test
public void twoBranchesWithCommitsAtSameTime() throws Exception {
    Commit commitA1 = commitAt(10L);
    Commit commitB1 = commitAt(10L);
    ProjectBranch branchA = branchFor(commitA1);
    ProjectBranch branchB = branchFor(commitB1);
    CommitCombiner combiner = new CommitCombiner(branchA, branchB);
    assertThat(combiner.getTrail(), Matchers.contains(commitA1, commitB1));
}
```

Thankfully, the implementation already provided this feature. We are done! And in that moment, my ego showed up again: “That implementation is an insult to my developer honour!” I shouted. Keep in mind that I had just thrown away a beautiful 130-line piece of algorithm for this alternative implementation:

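```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

public class CommitCombiner {

    private final ProjectBranch[] branches;

    public CommitCombiner(ProjectBranch... branches) {
        this.branches = branches;
    }

    public Iterable<Commit> getTrail() {
        final List<Commit> result = new ArrayList<>();
        // Collect the commits of all branches in the order the branches were given.
        // CollectionUtil is a helper from the author's project (not shown here).
        for (ProjectBranch each : this.branches) {
            CollectionUtil.addAll(result, each.commits());
        }
        return sortedWithBranchOrderPreserved(result);
    }

    private Iterable<Commit> sortedWithBranchOrderPreserved(List<Commit> result) {
        // Collections.sort is guaranteed to be stable: commits with equal dates
        // keep their relative order, i.e. the order of their branches.
        Collections.sort(result, antichronologically());
        return result;
    }

    // Newest first: compares the second date against the first.
    private <D extends Dated> Comparator<D> antichronologically() {
        return new Comparator<D>() {
            @Override
            public int compare(D o1, D o2) {
                return o2.getDate().compareTo(o1.getDate());
            }
        };
    }
}
```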

This final and complete second implementation, guided by the tests, consists of merely six lines of active code plus some boilerplate! Well, what did I expect? TDD doesn’t lead to particularly elegant solutions; it leads to the simplest thing that could possibly work and assures you that it works within the realm of your specification. There’s no room for the programmer’s ego between these lines, and that’s a good thing.
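The tie-breaking test passes without any extra code because `Collections.sort` is documented as stable: commits are collected branch by branch, so on equal dates the earlier branch stays in front, which is what the method name `sortedWithBranchOrderPreserved` hints at. For comparison, here is a sketch of the same few lines with Java streams, assuming stand-in `Commit` and `ProjectBranch` records; `Stream.sorted` is likewise stable for ordered streams.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical stand-ins for the post's domain types.
record Commit(String id, long date) {}
record ProjectBranch(List<Commit> commits) {}

final class StreamCommitCombiner {
    private final List<ProjectBranch> branches;

    StreamCommitCombiner(ProjectBranch... branches) {
        this.branches = Arrays.asList(branches);
    }

    // Concatenate all branches in the given order, then sort newest first.
    // The stable sort keeps equal-dated commits in branch order.
    List<Commit> getTrail() {
        return branches.stream()
                .flatMap(branch -> branch.commits().stream())
                .sorted(Comparator.comparingLong(Commit::date).reversed())
                .collect(Collectors.toList());
    }
}
```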

Conclusion

Thank you for reading this far! I learnt an important lesson that day (thank you, Jens!). Being able to pinpoint the main obstacle on my way to fully embracing TDD enabled me to further improve my skills, even on my own. It felt like opening a door that had always been closed to me. I hope you’ve gained some insights from this write-up, too. Feel free to share them!