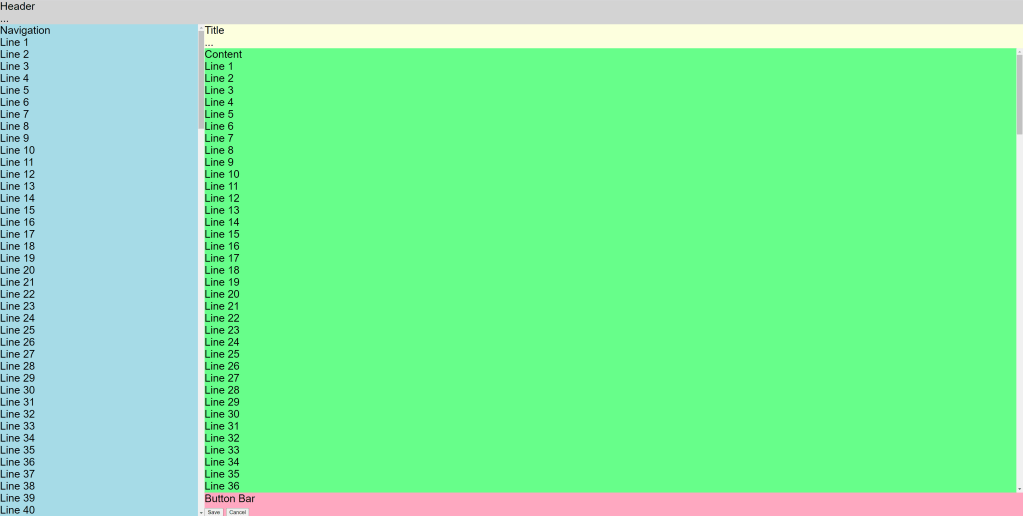

I recently ran into a problem with a settings page that consists of several views. The first view contains general settings, such as a device count, that affect the pages that follow. For example, I want a ComboBox on a later page to offer every configured device without reloading the page.

But how can the other views be informed that the setting has changed?

Property Change event

You may already know the PropertyChanged event from INotifyPropertyChanged. It lets a ViewModel announce such changes, typically to the View bound to it. Here is an example of a property that raises the event:

    using System.ComponentModel;
    using System.Runtime.CompilerServices;

    public class GeneralViewModel : INotifyPropertyChanged
    {
        public event PropertyChangedEventHandler PropertyChanged;

        private int deviceCount;

        // Raises PropertyChanged; the compiler supplies the caller's name if none is passed.
        private void OnPropertyChanged([CallerMemberName] string propertyName = "")
        {
            PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(propertyName));
        }

        public int DeviceCount
        {
            get { return deviceCount; }
            set
            {
                deviceCount = value;
                OnPropertyChanged(nameof(DeviceCount));
            }
        }
    }
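
The setter above raises the event on every assignment, even when the new value equals the old one. A common refinement is to return early when nothing changed. The following is only a minimal sketch of that idea, not part of the original code: the class name `GuardedGeneralViewModel` is hypothetical, and the small top-level program exists only to count how often the event fires.

```csharp
using System;
using System.ComponentModel;
using System.Runtime.CompilerServices;

// Demo: count how often PropertyChanged is raised for DeviceCount.
var vm = new GuardedGeneralViewModel();
int raised = 0;
vm.PropertyChanged += (sender, e) =>
{
    if (e.PropertyName == nameof(GuardedGeneralViewModel.DeviceCount))
        raised++;
};

vm.DeviceCount = 3; // value changes, event is raised
vm.DeviceCount = 3; // same value, setter returns early
vm.DeviceCount = 4; // value changes again

Console.WriteLine(raised); // prints 2

// Hypothetical variant of GeneralViewModel with a change guard in the setter.
public class GuardedGeneralViewModel : INotifyPropertyChanged
{
    public event PropertyChangedEventHandler PropertyChanged;

    private int deviceCount;

    public int DeviceCount
    {
        get { return deviceCount; }
        set
        {
            if (deviceCount == value)
                return; // nothing changed, so do not notify

            deviceCount = value;
            OnPropertyChanged(); // name is filled in by [CallerMemberName]
        }
    }

    private void OnPropertyChanged([CallerMemberName] string propertyName = "")
    {
        PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(propertyName));
    }
}
```

Whether the guard is worth it depends on how expensive the subscribers are. For a list that is rebuilt on every change, skipping redundant notifications is usually a cheap win.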

To react to the event, subscribe a handler method that is called whenever the event is raised.

    // Subscribe once, for example in the constructor.
    PropertyChanged += DoSomething;

    private void DoSomething(object sender, PropertyChangedEventArgs e)
    {
        // Ignore changes to other properties.
        if (e.PropertyName != nameof(DeviceCount))
            return;

        // do something
    }

But other views do not know about this event, so they cannot react to it.

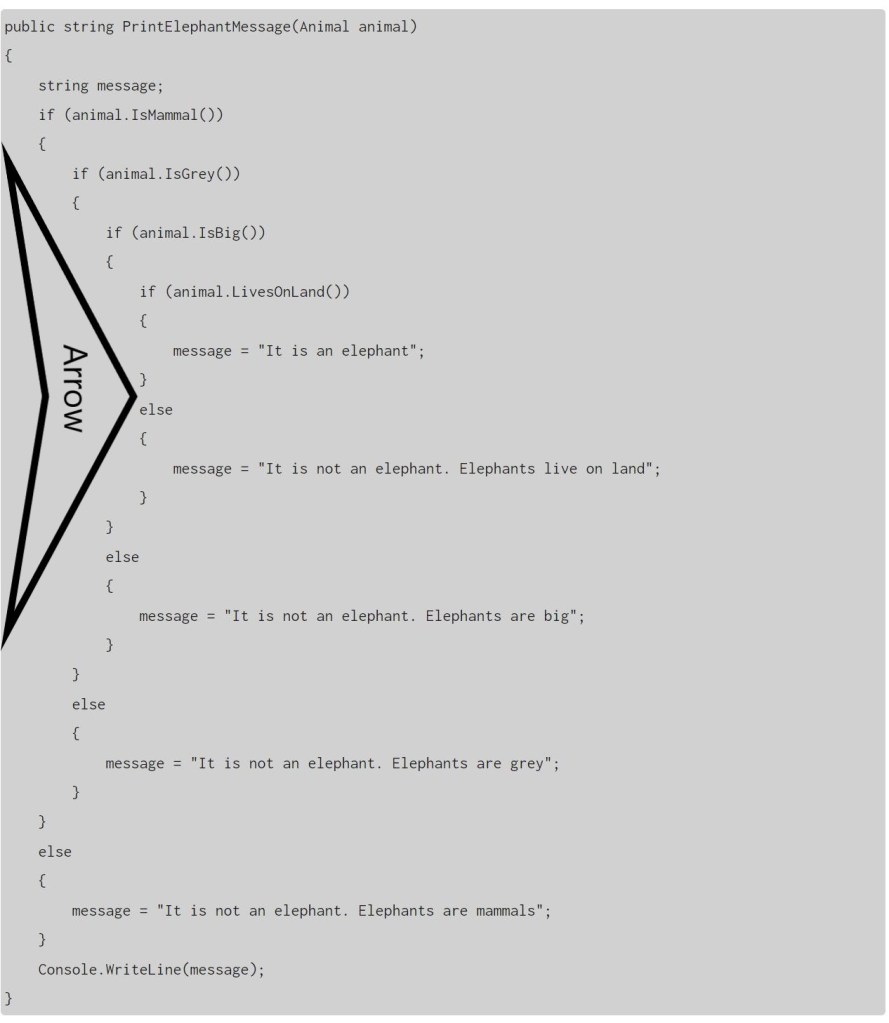

React in another ViewModel

Another ViewModel that is interested in this property can be informed if it is given the GeneralViewModel, for example through a setup method that is called when the ViewModel is created. In my example, the other view shows a list of all devices and has to update when the device count changes. So I pass the GeneralViewModel to it, and it subscribes its own handler to the PropertyChanged event.

    using System.Collections.Generic;
    using System.ComponentModel;
    using System.Runtime.CompilerServices;

    public class DeviceSelectorViewModel : INotifyPropertyChanged
    {
        public event PropertyChangedEventHandler PropertyChanged;

        private List<string> deviceItems;

        // Called once after creation: subscribe and build the initial list.
        public void Setup(GeneralViewModel general)
        {
            general.PropertyChanged += General_PropertyChanged;
            GenerateNewItems(general.DeviceCount);
        }

        public List<string> DeviceItems
        {
            get { return deviceItems; }
        }

        private void General_PropertyChanged(object sender, PropertyChangedEventArgs e)
        {
            // Only the device count is relevant here.
            if (e.PropertyName != nameof(GeneralViewModel.DeviceCount))
                return;

            var general = (GeneralViewModel)sender;
            GenerateNewItems(general.DeviceCount);
        }

        // Rebuilds the list and notifies the bound view.
        private void GenerateNewItems(int deviceCount)
        {
            List<string> list = new();
            for (int i = 1; i <= deviceCount; i++)
            {
                list.Add($"Device #{i}");
            }

            deviceItems = list;
            OnPropertyChanged(nameof(DeviceItems));
        }

        private void OnPropertyChanged([CallerMemberName] string propertyName = "")
        {
            PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(propertyName));
        }
    }

When the event is raised, the list is regenerated and the UI is notified of the change through the ViewModel's own PropertyChanged event.
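
To see the wiring end to end, here is a reduced, self-contained sketch. It is not the original code: `GeneralSketch` and `SelectorSketch` are hypothetical stand-ins for the two ViewModels, trimmed so the example stays short. It also adds a `Teardown` method, which is my own assumption about how you might unsubscribe. Because the event holds a delegate that points at the subscriber, the GeneralViewModel keeps the other ViewModel reachable for as long as the subscription exists.

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel;

// Wire the two view models together, as the settings page would on creation.
var general = new GeneralSketch { DeviceCount = 2 };
var selector = new SelectorSketch();
selector.Setup(general);
Console.WriteLine(string.Join(", ", selector.DeviceItems)); // Device #1, Device #2

general.DeviceCount = 3; // the selector rebuilds its list in response
Console.WriteLine(selector.DeviceItems.Count); // 3

selector.Teardown(); // unsubscribe; later changes are ignored
general.DeviceCount = 5;
Console.WriteLine(selector.DeviceItems.Count); // still 3

// Reduced stand-in for GeneralViewModel (hypothetical name).
public class GeneralSketch : INotifyPropertyChanged
{
    public event PropertyChangedEventHandler PropertyChanged;

    private int deviceCount;

    public int DeviceCount
    {
        get { return deviceCount; }
        set
        {
            deviceCount = value;
            PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(nameof(DeviceCount)));
        }
    }
}

// Reduced stand-in for DeviceSelectorViewModel with a hypothetical Teardown method.
public class SelectorSketch
{
    private GeneralSketch source;

    public List<string> DeviceItems { get; private set; } = new List<string>();

    public void Setup(GeneralSketch general)
    {
        source = general;
        source.PropertyChanged += OnGeneralChanged;
        Rebuild(source.DeviceCount);
    }

    // Removes the handler so the publisher no longer references this object.
    public void Teardown()
    {
        if (source == null)
            return;

        source.PropertyChanged -= OnGeneralChanged;
        source = null;
    }

    private void OnGeneralChanged(object sender, PropertyChangedEventArgs e)
    {
        if (e.PropertyName != nameof(GeneralSketch.DeviceCount))
            return;

        Rebuild(((GeneralSketch)sender).DeviceCount);
    }

    private void Rebuild(int count)
    {
        var list = new List<string>();
        for (int i = 1; i <= count; i++)
        {
            list.Add($"Device #{i}");
        }
        DeviceItems = list;
    }
}
```

If both ViewModels live as long as the settings page itself, the missing unsubscribe is probably harmless. It starts to matter when the subscribing ViewModel is created and discarded more often than the one that raises the event.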

Conclusion

The PropertyChanged event is a good way to announce changed values. If you make it available to other ViewModels, the whole application can react to a changed setting without the user having to reload anything.