Every developer has personal formatting preferences.

Brace placement, line wrapping, imports, tabs vs. spaces — everybody has an opinion, and most of them are reasonable.

The problem starts when all these styles meet in one repository.

The cost of “personal style”

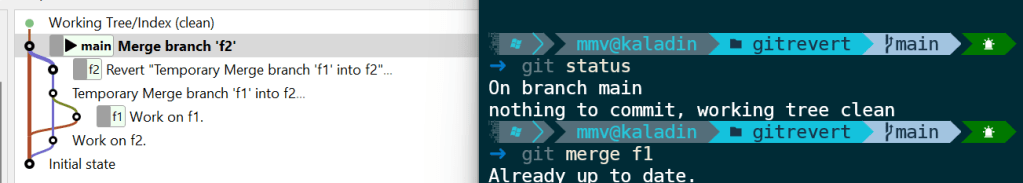

A codebase written by ten developers can easily look like ten different applications stitched together. Suddenly, pull requests are full of formatting changes. Git diffs become noisy. Merge conflicts appear because one developer reformatted a file differently than another. Code reviews drift into discussions about whitespace instead of actual functionality.

Even worse: inconsistent code slows down reading.

Humans recognize patterns quickly. When code follows the same visual structure everywhere, the brain spends less effort parsing syntax and more effort understanding intent.

Consistent formatting reduces cognitive load.

A shared style is less about aesthetics and more about reducing friction. So how do you solve this problem?

Shared project style

In IDEs like IntelliJ, you can define a code style and automatically reformat code according to those rules. This helps you keep your own code consistent. However, if every developer uses a different style, it does not help the project as a whole.

You can configure the style under:

Settings -> Editor -> Code Style

and save it as a project-level configuration by selecting the Project scheme. IntelliJ then creates a codeStyles folder containing XML files inside the .idea directory.

To share one configuration across the whole project, commit these files to Git. Every developer who opens the project then works with the same code style configuration.
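
One practical catch: many repositories ignore the .idea directory, in which case the code style files never reach Git. A minimal sketch of a .gitignore exception, assuming the project currently ignores .idea as a whole (adjust it to whatever your ignore rules actually look like):

```gitignore
# Ignore the contents of .idea (workspace state, caches, personal settings) ...
.idea/*
# ... but keep the shared code style configuration under version control
!.idea/codeStyles/
```

The pattern ignores `.idea/*` rather than `.idea/` on purpose: Git cannot re-include a path whose parent directory is itself excluded, so the exception only works when the parent directory stays visible.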

The IDE can then help enforce the agreed style by reformatting code before commit or even automatically on save.

Consistency beats preference

The important thing is not finding the perfect style. The important thing is agreeing on one.

A consistent codebase is easier to read, easier to review, and easier to maintain. Pull requests become smaller and cleaner because they contain actual changes instead of formatting noise.

Good formatting should be boring and automatic. That leaves more time for discussions that actually matter.