Today, we celebrated our third Open Source Love Day (OSLD). It was somewhat hampered by a company-wide illness outbreak, but we tried to make the best of the situation.

Open Source Love Days are our way of showing our appreciation and care for the Open Source software ecosystem. Without professional Open Source software, our work wouldn’t be as much fun, or wouldn’t be possible at all. You can read more about our motivation and the specifics in our first OSLD blog post.

You can participate in our OSLD by using the features we’ve built today:

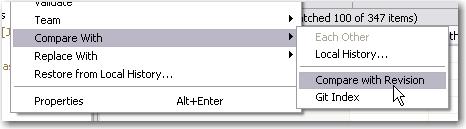

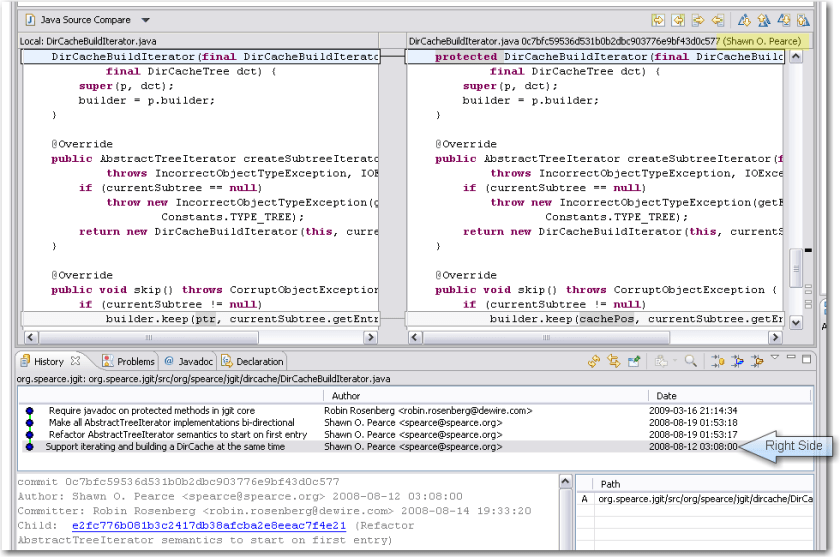

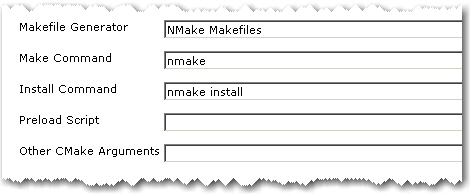

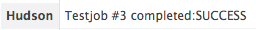

- We think git is an enrichment to the SCM market, as Eclipse is to the IDE market. Improving the quality of Eclipse’s git plugin is the next logical step. The ability to diff the content of two revisions in EGit was committed today. As a bonus, the name of the committer is now displayed correctly, too. See the screenshots to get the idea:

While developing EGit, we were already using it to pull the sources. Unfortunately, the repository URL had changed substantially since our checkout without us noticing, which got us into trouble when we tried to follow the contributor guide. The command line version of git isn’t that communicative yet. Still, this is a great opportunity to learn about the real-world problems of using git. The EGit contributor guide itself is a fantastic way for a project to show initial appreciation for volunteer efforts. Thanks for caring, guys! If you are interested in reviewing our changes yourself, fetch the patch.

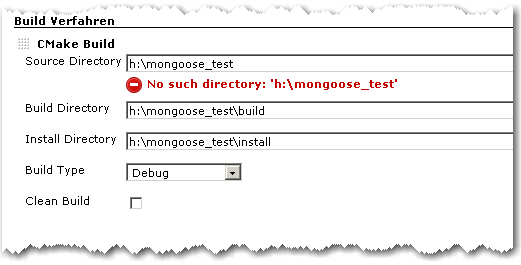

- Another part of today’s work was on the KDevelop project. We intended to work on some small outstanding features or bugs, whatever was on the KDevelop 4 list, but we spent the day fixing our development machines instead. Our Ubuntu Linux installation (8.10) was far too old to give useful results, and KDE needs to be up to date to develop KDevelop. Apart from our own sluggishness in keeping our virtual machines on the bleeding edge, the checkout experience of KDevelop was rather sleek. What bothers us a bit is the ominous entanglement between KDevelop and KDE. It seems you can’t have one without the other and need to master both to get anywhere.

- As a third part, we wanted to contribute to the TANGO project (not the useful icon collection, but the useful control system). They recently migrated their main repository from CVS to SVN, but the migration still seems unfinished. At the very least, the migration effort lacks public documentation for the occasional contributor. That’s a real showstopper, because you never get beyond the very first step: setting up a working project. We won’t give up and will email the project leads about this, but it didn’t fit into this OSLD.

What lessons did we learn today?

- Merely being able to view or download the source code doesn’t make an Open Source project. The key to success is the ability of complete strangers to hop in and perform useful work. Having terse but accurate documentation helps a lot. The EGit contributor guide is a good example of a single document that makes the difference. If you own an open source project and want to attract occasional contributors (like us), write such a document and watch us (and others) drop you a patch. That said, we have come to believe that writing technical documentation for developers is one of the most important roles on a project. Perhaps we will join some projects in the future to fill that role.

To sum it up, this OSLD was limited from the beginning by developer availability. Where documentation was lacking, we nearly ground to a halt. We look forward to our next OSLD in December.