Ever since the year 2000 (or Y2K), software developers have dreaded the start of a new year. You never know which arbitrary limit will affect the fitness of your projects. Sometimes, it isn’t even the new year (see the year 2038 problem, which will manifest itself on January 19, 2038). But more often than not, the first day of a new year is a risky time.

Welcome, 2022!

The year 2022 started with Microsoft Exchange quarantining lots of e-mails for no apparent reason other than that it was no longer 2021. I was amused by this “other people’s problem” until my phone rang.

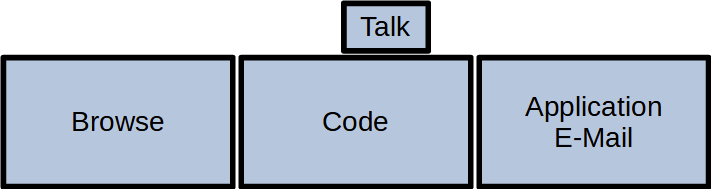

A customer reported that one of my applications no longer started, although it had run perfectly a few days earlier, in 2021. My mind began to race:

The application in question wasn’t updated recently. It has to be something in the code that parses a current date with an unfortunate date/time format. My search for all format strings (my search term was “MMddHH” without the quotes) in the application source code brought some expected instances like “yyyyMMddHHmmss” and one of a very suspicious kind: “yyMMddHHmm”.

The place where this suspicious format was used took a version information file and reported a version number, some other data and a build number. The build number was defined as an integer (32 bit). Let me explain why this could be a problem:

2G should be enough for everyone!

A 32-bit integer has an arbitrary value limit of 2^31 = 2.147.483.648 (strictly speaking, the largest signed value is one less: 2.147.483.647). If you represent the last minute of 2021 in the format above, you get 2.112.312.359, which is beneath the limit, but quite close.

If you add one minute and count up the year, you’ll be at 2.201.010.000, which is clearly above the value limit and results in either an integer overflow ending in a very negative number or an arithmetic exception.

In my case, it was the arithmetic exception which halted the program in its very first steps while figuring out what, where and when it is.
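The arithmetic above can be checked with a few lines of Python. This is only a sketch, not the original code: the strftime pattern `%y%m%d%H%M` is assumed to be the counterpart of “yyMMddHHmm”, and `build_number` and `INT32_MAX` are names made up for illustration. Python integers never overflow, so the 32-bit limit is compared explicitly.

```python
from datetime import datetime

INT32_MAX = 2**31 - 1  # largest value of a signed 32-bit integer


def build_number(moment: datetime) -> int:
    # "%y%m%d%H%M" is the strftime counterpart of the pattern "yyMMddHHmm"
    return int(moment.strftime("%y%m%d%H%M"))


last_minute_2021 = build_number(datetime(2021, 12, 31, 23, 59))
first_minute_2022 = build_number(datetime(2022, 1, 1, 0, 0))

print(last_minute_2021, last_minute_2021 <= INT32_MAX)    # 2112312359 True
print(first_minute_2022, first_minute_2022 <= INT32_MAX)  # 2201010000 False
```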

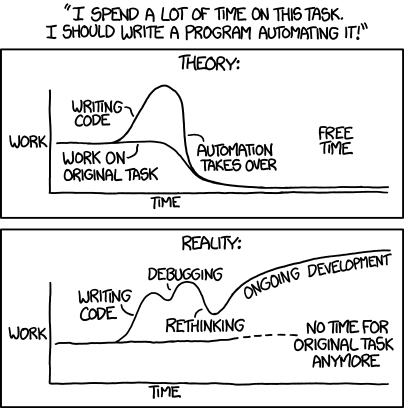

This is a rookie mistake that can only be explained by “it evolved that way”. The mistake has been in the source code since 2004. I wrote it myself, so it is my mistake. But I didn’t just think up a weird date format that won’t spark joy 18 years later.

I started with a build number from continuous integration. The first build of the project is “build 1”, the next is “build 2”, and so on. You really have to commit early, commit often (and trigger builds) to reach the integer limit that way. This is true for a linear series of builds. But what if you decide to use feature branches? The branches can happen in parallel and each have their own build number series. So “build 17” could be the 17th build of your main branch and go into production, or it could be a fleeting build result on a feature branch that gets merged and deleted a few days later. If you want to use the build number for chronological ordering, perhaps to look for updates, you cannot rely on the CI build numbering. Why not use time for your chronological ordering?

Time as an integer

And how do you capture time in an integer? You invent a clever format that captures the essence of “now” in a string that can be parsed as an integer. The infamous “yyMMddHHmm” is born. The year 2022 is a long time down the road if you apply a quick and clever fix in 2004.

But why did the application crash in 2022 without any update? The build number had to be from 2021 and would still pass the conversion. Well, it turned out that this specific application had no build number set, because we changed our build system and deemed this information not important for this application. So the string in the version file was empty. How is an empty string interpreted as today?

Well, there was another clever piece of code, written by another developer in 2008, that took a null or empty string and replaced it with the current date/time. The commit message says “Quickfix for new version format”.
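How the fallback and the 32-bit conversion interact can be sketched as follows. None of this is the original code: `parse_int32` and `read_build_number` are hypothetical helpers, `OverflowError` stands in for whatever exception the real integer parser raised, and the clock is passed in as a parameter so the behavior on both sides of the new year can be shown.

```python
from datetime import datetime
from typing import Optional

INT32_MAX = 2**31 - 1
INT32_MIN = -2**31


def parse_int32(text: str) -> int:
    """Parse text the way a strict 32-bit integer parser would (hypothetical)."""
    value = int(text)
    if not INT32_MIN <= value <= INT32_MAX:
        raise OverflowError(f"{text} does not fit into a 32-bit integer")
    return value


def read_build_number(raw: Optional[str], now: datetime) -> int:
    # The 2008 quick fix: a missing or empty value silently becomes "now".
    if not raw:
        raw = now.strftime("%y%m%d%H%M")  # counterpart of "yyMMddHHmm"
    return parse_int32(raw)


# An empty build number still parses on the last day of 2021 ...
print(read_build_number("", datetime(2021, 12, 31, 23, 59)))

# ... and fails on the first day of 2022, without any change to the application.
try:
    read_build_number("", datetime(2022, 1, 1, 0, 0))
except OverflowError as error:
    print("startup fails:", error)
```

A fixed build number written in 2021 keeps working under the same clock, which is why only the application with the empty value broke on New Year’s Day.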

Combined cleverness

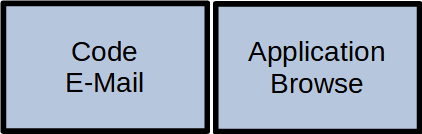

Combine these three things and you have the perfect timebomb:

- A clever way to store a date/time as an integer

- A clever way to interpret missing settings

- A lazy way to introduce a new build process

The problem described above was present in a total of five applications. Four applications had fixed build numbers/dates and would have broken with the next version built in 2022 or later. The fifth application had an empty build number and failed exactly as programmed after 01.01.2022.

Lessons learnt

What can we learn from this incident?

First: Clever code or a quick fix is always a bad idea.

Second: Cleverness doesn’t stack. One clever workaround can neutralize another clever hack even if both “solutions” would work on their own.

Third: If your solution relies on a certain limit to never be reached, it is only a temporary solution. The limit will be reached eventually. At least leave an automated test that warns about this restriction.
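Such a warning test could look roughly like this. It is a minimal sketch under assumptions: `fits_int32` and `check_format_headroom` are invented names, the five-year margin is an arbitrary choice, and the date is passed in so the check can be exercised for any year (a real test would pass the current date).

```python
from datetime import datetime, timedelta

INT32_MAX = 2**31 - 1


def fits_int32(moment: datetime) -> bool:
    # "%y%m%d%H%M" is the strftime counterpart of "yyMMddHHmm"
    return int(moment.strftime("%y%m%d%H%M")) <= INT32_MAX


def check_format_headroom(today: datetime,
                          margin: timedelta = timedelta(days=5 * 365)) -> None:
    """Fail while there is still time to act, not on the day the limit hits."""
    deadline = today + margin
    if not fits_int32(deadline):
        raise AssertionError(
            f"yyMMddHHmm no longer fits into 32 bits by {deadline:%Y-%m-%d}"
        )


check_format_headroom(datetime(2010, 1, 1))  # passes: plenty of headroom left
```

With this margin, the check would have started failing in early 2017, five years before the format broke.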

Fourth: Don’t mitigate a hack with another hack. You only make your situation worse in the long run.

The fourth take-away is important. You could fix the problem described above in at least two ways:

- Replace the integer with a long (64 bit) and hope that your software isn’t in production anymore when the long wraps around. Replace the date/time format with the usual “yyyyMMddHHmmss”.

- Leave the integer in place and change the date/time format to “yyDDDHHmm” with “DDD” being the day of the year. With this approach, you shorten the string by one digit and keep it below the limit. You also make the build number even less readable and leave a timebomb for the year 2100.
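The value ranges of both options can be compared with a short sketch. The strftime patterns are assumed counterparts of the formats named above (`%j` is the day of the year), and the variable names are illustrative only.

```python
from datetime import datetime

INT32_MAX = 2**31 - 1
INT64_MAX = 2**63 - 1

end_of_century = datetime(2099, 12, 31, 23, 59, 59)

# Option 1: "yyyyMMddHHmmss" stored in a 64-bit integer
wide = int(end_of_century.strftime("%Y%m%d%H%M%S"))

# Option 2: "yyDDDHHmm" kept in a 32-bit integer
compact = int(end_of_century.strftime("%y%j%H%M"))

# Option 2 one minute into the next century: the two-digit year wraps to 00
after_wrap = int(datetime(2100, 1, 1, 0, 0).strftime("%y%j%H%M"))

print(wide, wide <= INT64_MAX)        # 20991231235959 True
print(compact, compact <= INT32_MAX)  # 993652359 True
print(after_wrap < compact)           # True: chronological ordering breaks in 2100
```

The second option never overflows, but a build from 2100 sorts before every build from the previous century.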

You can probably guess which route I took, even if it was a lot more work than expected. The next blog entry about this particular code can be expected on 01.01.10000.