This might be the start of a new blog post series about building blocks for an effective business IT landscape.

We are a small company that strives for a high level of automation and traceability, the latter often implemented in the form of documentation. This has the amusing effect that we often automate the creation of documentation or at least the creation of reports. For a company of fewer than ten people working mostly in software development, we have lots of little services and software tools that perform tasks for us. In fact, we work with 53 different internal projects (this is what the blog post series could cover).

Helpful spirits

Some of them are rather voluminous or at least too big to replace easily. Others are just a few lines of script code that perform one particular task and could be completely rewritten in less than an hour.

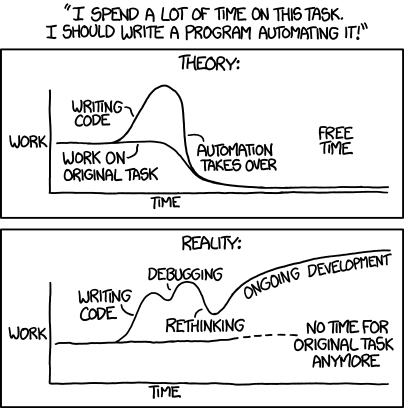

They all share one goal: to make common or tedious tasks that we have to do regularly easier, faster, less error-prone or just more enjoyable. And once we’ve learnt how to reflect on our work in this regard, we discover possibilities for additional services everywhere.

Let me take you through the motions of discovering and developing such a “basic business service” with a recent example.

A fateful Friday

The work that led to the discovery started abruptly on Friday, 10th December 2021, when a zero-day vulnerability with the number CVE-2021-44228 was publicly disclosed. It had a severity rating of 10 (on a scale from 0 to, well, 10) and was promptly nicknamed “Log4Shell”. From one minute to the next, we had to scan all of our customer projects, our internal projects and the products that we use, evaluate the risk and decide on actions that could mean disabling a live system until the problem was properly understood and fixed.

Because we don’t only perform work but also document it (remember the traceability!), we created a spreadsheet with all of our projects and a criteria matrix to decide which projects needed our attention the most and what actions to take. An example of this process would look like this:

- Project A: Is the project at least in parts programmed in Java? No -> No attention required

- Project B: Is the project at least in parts programmed in Java? Yes -> Is log4j used in this project? Yes -> Is the log4j version affected by the vulnerability? No -> No immediate attention required
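The two walkthroughs above can be sketched as a small decision function. This is only an illustration and not the criteria matrix we actually used: the `ProjectAnswers` class and its field names are hypothetical, and the outcomes for branches that the examples above don't cover (log4j not used, affected version found, answer still unknown) are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProjectAnswers:
    """Answers to the triage questions for one project (hypothetical structure)."""
    name: str
    uses_java: bool
    uses_log4j: Optional[bool] = None        # None: not determined yet
    version_affected: Optional[bool] = None  # None: not determined yet


def triage(p: ProjectAnswers) -> str:
    """Walk the questions in order and stop at the first conclusive answer."""
    if not p.uses_java:
        return "no attention required"
    if p.uses_log4j is None:
        return "open question: is log4j used?"  # assumed outcome
    if not p.uses_log4j:
        return "no attention required"  # assumed outcome
    if p.version_affected is None:
        return "open question: is the log4j version affected?"  # assumed outcome
    if p.version_affected:
        return "immediate attention required"  # assumed outcome
    return "no immediate attention required"


project_a = ProjectAnswers("Project A", uses_java=False)
project_b = ProjectAnswers("Project B", uses_java=True, uses_log4j=True,
                           version_affected=False)

for project in (project_a, project_b):
    print(f"{project.name}: {triage(project)}")
```

Keeping unknown answers as `None` mirrors how the spreadsheet was filled in over time: a row stays an open question until somebody has looked at the project.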

Our information situation changed from hour to hour as the whole world did two things in parallel: The white hats gathered information about possible breaches and unaffected versions while the black hats tried to find and exploit vulnerable systems. This process happened so fast that we found ourselves lagging behind because we couldn’t effectively triage all of our projects.

One bottleneck was the creation of the spreadsheet. Even just the process of compiling a list of all projects and ruling out the ones that are obviously not affected by the problem was time-consuming and not easily distributable.

Post mortem

After the dust settled, we had switched off one project (which turned out to be not vulnerable on closer inspection) and confirmed that all other projects (and products) weren’t affected. We fended off one of the scariest vulnerabilities in recent times with barely a scratch. We could celebrate our success!

But as happy as we were, the post mortem of our approach revealed a weak point: we could not quickly create spreadsheets about typical business/domain entities of our company, like project repositories. If we had been able to automate this job, we would have had a complete list of all projects in a few seconds and could have worked from there.

This was the birth of our list generator tool (we called it “sunzu” because, well, that would require the explanation of a German wordplay). It is a simple tool: You press a button, the tool generates a new page with a giant table in the wiki and forwards you to it. Now you can work with that table, remove columns you don’t need, add ones that are helpful for your mission and fill out the cells that are empty. But the first step, a complete list of all entities with hyperlinks to their details, is a no-effort task from now on.
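To give an idea of how little is needed for the core step, here is a minimal sketch of turning a list of entities into a table with hyperlinks and empty columns to fill out. It is not the actual sunzu code: the `Entity` class, the `render_table` function, the example URLs and the Markdown-style pipe table are all assumptions for illustration, and a real wiki will likely expect its own markup and an API call to create the page.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """One row of the generated list, e.g. a project (hypothetical structure)."""
    name: str
    url: str  # link to the entity's detail page
    attributes: dict = field(default_factory=dict)  # column name -> known value


def render_table(entities, columns, empty_columns=()):
    """Render a pipe table: linked name, known attributes, then blank columns."""
    header = ["Name", *columns, *empty_columns]
    lines = [
        "| " + " | ".join(header) + " |",
        "| " + " | ".join(["---"] * len(header)) + " |",
    ]
    # Sort by name so the list is stable and easy to scan.
    for entity in sorted(entities, key=lambda e: e.name.lower()):
        cells = [f"[{entity.name}]({entity.url})"]
        cells += [str(entity.attributes.get(column, "")) for column in columns]
        cells += [""] * len(empty_columns)  # to be filled out by a human
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


projects = [
    Entity("beta", "https://wiki.example/beta", {"Languages": "Java"}),
    Entity("Alpha", "https://wiki.example/alpha", {"Languages": "Python"}),
]
print(render_table(projects, ["Languages"], empty_columns=["Affected?"]))
```

The interesting work in such a tool is not the rendering but collecting the entities from wherever they live, which is why the sketch takes them as plain input.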

No-effort chores

If Log4Shell happened today, we would still have to scan all projects and decide on actions for each one. We would still have to document our evaluation results and our decisions. But we would start with a list of all projects, a column that lists their programming languages and other data. We would be certain that the list is complete. We would be certain that the information is up-to-date and accurate. We would start with the actual work and not with the preparation for it. The precious minutes at the beginning of a time-critical task would be available and not bound to infrastructure setup.

Ever since the list generator tool learned to generate a spreadsheet of all projects, it has accumulated additional entities of our company that can be listed. For some, it was easy to collect the data. Others require more effort. There are some that don’t justify the investment (yet). But it had another effect: It is a central place for “list desires”. Any time we create a list manually now, we pose the important question: Can this list be generated automatically?

Basic business building blocks

In conclusion, our “sunzu” list generator is a basic business service that might be valuable for every organization. Its only purpose is to create elaborate spreadsheets about the most important business entities and present them in an editable manner. Whether the spreadsheet is created as an Excel file, as an editable website like tabble or as a wiki page like in our case is secondary.

The crucial effect is that you can think “hmm, I need a list of these things that are important to me right now” and just press a button to get it.

Sunzu is a web service written in Python, with a total of fewer than 400 lines of code. It could probably be rewritten from scratch in one focussed workday. If you work in an organization that relies on lists or spreadsheets (and which organization doesn’t?), think about which data sources you tap into to collect the lists. If a human can do it, you can probably teach it to a computer.

What are the entities/things in your domain or organization for which you would like to have a complete list/spreadsheet generated automatically? Tell us in the comments!