This is the first part of a series on how to boost your build box without much effort. This episode talks about the effects of different harddisks.

W e actively use Hudson as our continuous integration server software. It has a nice little feature called “build history trend” that shows the duration of all archived builds. One of our major projects started out small and fast with a build duration of 01:20 minutes. One and a half year later, it reached for the 04:00 minute hurdle. It wasn’t a surprise to us, as the build has more than four times the work now and the hardware staid the same.

e actively use Hudson as our continuous integration server software. It has a nice little feature called “build history trend” that shows the duration of all archived builds. One of our major projects started out small and fast with a build duration of 01:20 minutes. One and a half year later, it reached for the 04:00 minute hurdle. It wasn’t a surprise to us, as the build has more than four times the work now and the hardware staid the same.

But a question emerged: How can we speed up our build?

Applying optimization: The basic maths

We did a quick review of our ant build scripts to ensure there’s nothing fundamentally wrong with them and then decided which road to follow first: Optimizing the build scripts or boosting the hardware? There is only one pragmatic answer to us: boost the hardware as long as it stays reasonable in price. Every optimization in the build script would need its time (which isn’t cheap) and possibly increase the script complexity (which is very expensive later on).

Optimizing the hardware

So we went on the journey to make a fast buildbox even faster. We started out with a dual core processor (2.6 GHz), a decent-but-standard harddisk and 4 GB of memory. We replaced every part on its own to see the effect. The journey includes:

Our goal is to cut our build time down by 50 percent, to a little less than 02:00 minutes. We don’t want to spend more than 500 EUR for new hardware. So now, after this introduction:

Part I: Replacing the harddisk

Our buildbox starts with a more or less normal harddisk (0.5 TB), certified for continuous usage. We could have bought just another normal harddisk of a newer generation, but that doesn’t cut it in our experience (we didn’t verify specifically, though).

Calling the carnivores

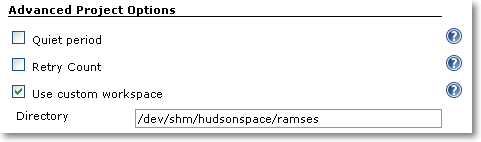

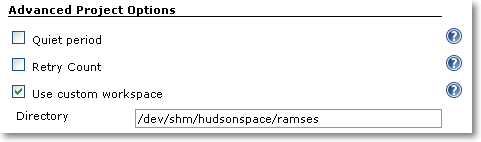

If you need to upgrade your harddisk, you can buy yourself a VelociRaptor drive and be pretty much assured that you’ll notice the difference. We had pleasant experiences with this kind of fast-spinning drives before, but this time, we wanted to go a step further and try a fast Solid State Disk (SSD). As you only need to relocate the working directories (called workspaces in hudson terminology) of your projects to the new disk, the capacity isn’t important as long as it’s greater than your project sizes. You can just plug your new disk in the buildbox and format it with a high performance file system. As our buildbox runs on Linux, relocating the workspace is just setting a symbolic link. You do not even tell hudson about it. If you happen to run on Windows, check out the “use custom workspace” setting on your job’s configuration page.

An investment of about 200 EUR and 15 minutes of installation later, we had the result: The build average before was 03:30 minutes and now 03:10 minutes. That’s not a big leap forward, as others found out, too. It’s not that the SSD was bad, it performed exceptionally well in the benchmarks, but the harddisk wasn’t the bottleneck. To further proof our assumption, we installed the fastest harddrive you can get: the RAM disk.

Only pretend to use the disk

Linux (like other unixoid systems) has the great feature of an emulated harddisk right in your memory. On Debian/Ubuntu systems, this emulated drive is mounted at /dev/shm and has a capacity of half your total physical memory. It grows dynamically, so you don’t have to worry about its initial size. But you have to check if your workspace fits into it. Our buildbox had 4 GB of RAM and 2 GB were enough to contain the hudson workspace. We configured hudson to build there (you can use symbolic links or the “custom workspace” setting as shown in the picture) and got the result: The build average went down to 02:50 minutes.

Review on the results

That’s as far as we could speed up our buildbox by just replacing the harddisk. Down from 03:30 minutes to 02:50 minutes, a reduction of 40 seconds or 20 percent. In fact, we even cheated as the buildbox doesn’t use an harddisk anymore for building. With Linux, it’s incredibly easy to utilize a RAM disk as long as you have enough RAM to loan. For Windows systems, there are several software products that can do the same. If you don’t want to loan your RAM, you can look into HyperDrives, but for a price!

So we conclude that the fastest harddisk is an emulated one and even then, its effect on the build time is limited.

Stay tuned for the next episode of our journey to a faster buildbox, when we apply a faster CPU.