In the first and second part of our effort to speed up our buildbox, we replaced the harddisk with a RAM disk and swapped in a bigger CPU. This brought the build time down from 03:30 minutes to 02:00 minutes.

In the first and second part of our effort to speed up our buildbox, we replaced the harddisk with a RAM disk and swapped in a bigger CPU. This brought the build time down from 03:30 minutes to 02:00 minutes.

Boosting the memory

When we began the journey, we wanted to undercut the 02:00 minutes threshold. The last component that directly impacts performance of our box was the memory. We started out with 4 GB of DDR2-800 modules. To have a feeling for the effects, we upgraded to 4 GB of DDR2-1066 first and then added another 4 GB, resulting in 8 GB of RAM. We expected the performance gain to be small, but noticeable. The RAM disk, for example, is directly affected by memory speed.

As much, but faster

The first upgrade brought the first surprise: Upgrading from DDR2-800 to DDR2-1066 modules didn’t change anything. It’s not that the mainboard or CPU doesn’t support the faster RAM, it just seems to be fast enough, despite the data bus clock rate. Our build process still took 02:00 minutes, reproducible and without exception.

Filling all the banks

The mainboard can load up to 16 GB of RAM, but our budget just allowed to buy 8 GB of DDR2-1066 RAM. We installed it and ran the same 32 bit Ubuntu Linux as before. The build process took 02:00 minutes, which was expected now.

Changing to 64bit

We changed to boot harddisk, installed a 64 bit Ubuntu Linux and ran the build again. Still 02:00 minutes. The switch to 64 bit wasn’t a big deal with Java, but some of the included native libraries complained about the change. Recompiling them solved the issue.

Finally reaching the target

As a last measure, we increased the maximum memory of the build JVM to the biggest value it would accept. This was -Xmx2600m, a surplus of 600 MB to the original setting. This sped up the build process by five seconds, it took 01:55 minutes now.

Conclusion and perspective

We’ve reached our anticipated target of less than two minutes build time. We exceeded our original budget of 500 EUR, but bought some parts that finally weren’t used in the build box, but elsewhere. The two parts that made the whole difference were the CPU and some more memory to spend it on the RAM disk.

If you want to speed up your single build box, aim for the CPU/RAM combo and try to install a RAM disk to perform all the work on.

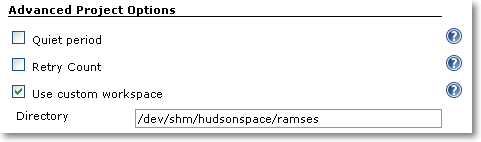

This leads me to the perspective of the next part of the series: If you plugged in the most expensive CPU and enormous amounts of RAM to speed up your buildbox, you still aren’t done. You should invest some time to look into distributed builds. Hudson as our continuous integration server provides nearly instant “build slave” support. With this feature, you can set up a whole build farm to further increase your build throughput.

Stay tuned for “Part IV: Beyond the box”