Writing software in Python is often a pleasure and, thanks to the language's expressiveness and rich ecosystem, can lead to great products at limited cost.

One area where, imho, Python falls a bit short is packaging and deployment. On Linux, many users and customers expect packages for their platform so that they can manage installation and updates with the standard tools.

This is where the pain often starts. Depending on the dependencies of your Python project, it may be simple or rather hard to provide a decent experience for the people managing your software.

I want to present several ways of providing a decent deployment experience to your customers, specifically on Debian-based Linux distributions.

The simple case

If all the dependencies of your project are available in usable versions for the target distribution, it is quite easy to package a Python project as a .deb. My preferred way is to just use stdeb:

    python3 -m build --sdist --no-isolation
    py2dsc-deb --with-python3=True --debian-version 1 ./dist/my_project.tar.gz

This will build a simple Debian package that can be installed on a matching target platform. For simple cases this is often enough.

If only one or a few dependencies are missing, you could consider packaging them with the same approach, so that your project can still take this route.
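As a rough sketch of that idea, the helper below downloads the source distribution of one missing dependency from PyPI and runs it through the same stdeb call as the main project. The function name `package_dependency` and the `./deps` working directory are made up for this example. It assumes pip and stdeb are installed on the build machine and that the dependency builds cleanly from its sdist.

```shell
#!/bin/sh
# Sketch: build a .deb for one missing dependency with stdeb.
# package_dependency and ./deps are hypothetical names for illustration.
set -e

package_dependency() {
    name="$1"
    workdir="${2:-./deps}"
    mkdir -p "$workdir"
    # Fetch only the source distribution, without the dependency's own
    # dependencies.
    python3 -m pip download --no-deps --no-binary :all: -d "$workdir" "$name"
    # Same stdeb invocation as for the main project.
    for sdist in "$workdir"/*.tar.gz; do
        py2dsc-deb --with-python3=True --debian-version 1 "$sdist"
    done
}

# Example call (commented out so the file can be sourced safely):
# package_dependency some-dependency
```

stdeb writes its results to deb_dist/ by default, so the generated package can be picked up from there and shipped alongside your own.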

Not using packages at all

If some dependencies are not available on the target platform as Debian packages, it may be easiest to just provide a tarball with an installation script. This script would essentially perform the following steps:

- Unpack the source to a nice destination directory

- Create a venv there

- Install the dependencies in the venv

- Provide a start script and/or service definition to launch the software using the venv

This is simple and usually scales to bigger projects, but it does not integrate cleanly with the system tools. Administrators have to manage the software through your script instead of through the package manager they expect and are comfortable with.
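The four steps listed above could look roughly like this. It is only a sketch: the `PREFIX` and `TARBALL` defaults, the function names and the `my_project` module name are assumptions for illustration, and the script relies on `pip install <directory>` to pull in the dependencies declared in the project's own metadata.

```shell
#!/bin/sh
# Sketch of a tarball installer. PREFIX, TARBALL and the my_project
# module name are assumptions, not part of any real project.
set -e

PREFIX="${PREFIX:-/opt/my-project}"
TARBALL="${TARBALL:-my-project-1.0.0.tar.gz}"

unpack_source() {
    mkdir -p "$PREFIX/src"
    # Strip the top-level directory that sdist tarballs usually contain.
    tar -xzf "$TARBALL" -C "$PREFIX/src" --strip-components=1
}

create_venv() {
    python3 -m venv "$PREFIX/venv"
}

install_dependencies() {
    # Installs the project together with the dependencies it declares.
    "$PREFIX/venv/bin/pip" install "$PREFIX/src"
}

write_start_script() {
    mkdir -p "$PREFIX/bin"
    cat > "$PREFIX/bin/my-project" <<EOF
#!/bin/sh
# Run the application with the interpreter from the private venv.
exec "$PREFIX/venv/bin/python" -m my_project "\$@"
EOF
    chmod +x "$PREFIX/bin/my-project"
}

# Only run the steps when called as "./install.sh install".
if [ "${1:-}" = "install" ]; then
    unpack_source
    create_venv
    install_dependencies
    write_start_script
fi
```

Calling the venv's own pip and python by path avoids having to activate the venv, and the generated start script is also a natural target for a systemd unit's ExecStart line.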

A hybrid Debian package approach

My hybrid approach is a blend of the two above:

It builds a normal Debian package containing the project itself along with version and dependency metadata. In the postinst script of the package, however, it creates a venv and installs those dependencies that are unavailable or unusable (e.g. wrong version) on the target platform.

First we create the Debian packaging files using:

    python3 setup.py sdist
    dh_make -p my-project_1.0.0 -f dist/my-project-1.0.0.tar.gz

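This creates a debian/ directory containing all the packaging metadata files. You should mainly edit the control, copyright and changelog files. In control, make sure python3-venv is listed under Depends, because the postinst script calls python3 -m venv, which Debian ships in that separate package. Then craft the postinst file for our hybrid packaging approach: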

#!/bin/sh

set -e

case "$1" in

configure)

python3 -m venv /opt/my-project/venv

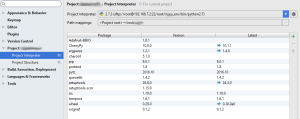

. /opt/my-project/venv/bin/activate && pip install PyQt5 pytango==9.5.1 taurus pyepics

;;

abort-upgrade|abort-remove|abort-deconfigure)

;;

*)

echo "postinst called with unknown argument '$1'" >&2

exit 1

;;

esac

exit 0

For correct removal we need a modified postrm script too:

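    #!/bin/sh
    # postrm: remove the venv that postinst created. dpkg does not track
    # these files, so they would otherwise be left behind.
    set -e

    case "$1" in
        purge|remove|upgrade|failed-upgrade|abort-install|abort-upgrade|disappear)
            # On upgrade, the new version's postinst recreates the venv.
            rm -rf /opt/my-project/venv/
            ;;
        *)
            echo "postrm called with unknown argument '$1'" >&2
            exit 1
            ;;
    esac

    exit 0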

A final dpkg-buildpackage -b -us -uc then gives us a Debian package that builds its own venv on the target machine, using the dependencies we actually need rather than what the system offers.
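Because the venv is only created when the package is configured, it can be worth running a quick smoke check after installation. The helper below is a sketch and not part of the package itself: `check_venv` is a made-up name, and the module list is an assumption derived from the pip packages installed in postinst.

```shell
#!/bin/sh
# Post-install smoke check (sketch). The default path matches the venv
# created by the postinst script; check_venv is a hypothetical helper.
check_venv() {
    venv="${1:-/opt/my-project/venv}"
    if [ ! -x "$venv/bin/python" ]; then
        echo "no venv found at $venv" >&2
        return 1
    fi
    # pytango is imported as "tango" and pyepics as "epics".
    "$venv/bin/python" -c "import PyQt5, tango, taurus, epics"
}
```

After installing the resulting .deb (dpkg-buildpackage places it in the parent directory), `check_venv` should return exit status 0 if the venv exists and all four packages can be imported.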

For us and our customers this is a perfect compromise:

It lets us define the dependencies and their versions exactly, mostly independently of what the target system offers, while still shipping a normal Debian package that is managed with the system tools.