We are currently running a series on how to make your (Hudson) build box faster. This article is about making it smaller.

Making a build box as small as possible isn’t a common requirement today, and it wasn’t one for us either. But when I privately bought a fit-PC2, we couldn’t resist trying it out as a Hudson server.

The fit-PC2

This is a computer that really fits everywhere: in your car, on the back of your monitor or, as in our case, on a beer mat. It’s a fully equipped PC with the specification of a standard netbook (Atom 1.6 GHz CPU, 1 GB RAM, 160 GB HDD) and the dimensions of a 5-port Ethernet switch. The most astonishing fact about it is that it uses standard-size 2.5″ notebook hard disks. For more information about the computer, look around the CompuLab website; they do not exaggerate.

Operating the fit-PC2

The fit-PC2 is a normal computer in every respect. We run ours with Ubuntu Linux and the official Java packages from Sun. As the case is fanless, it gets warm, but never hotter than 60 °C (140 °F). We measured an average temperature of 45 °C on the case surface while building a large project. The GNOME desktop feels snappy, application load times are short enough, and customizing the software setup is as easy as it can get with Ubuntu.

Setting up Hudson

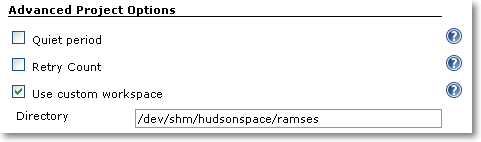

Installing the Hudson continuous integration server on a Debian-based Linux system is a matter of three commands. See Kohsuke Kawaguchi’s blog entry on that topic for details. After the automatic installation procedure, Hudson is already running on port 8080 of the machine. Setting up the project’s job and starting the first build took only a few minutes. The Hudson web interface reacts swiftly to clicks.
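If you want to confirm from a script that the freshly installed server really answers on port 8080, a minimal sketch could look like the following. The function name `hudson_is_up` and the default URL `http://localhost:8080/` are our own assumptions for illustration; the check only tells you that some HTTP server responds there, not that it is Hudson.

```python
import urllib.error
import urllib.request


def hudson_is_up(base_url="http://localhost:8080/", timeout=5.0):
    """Return True if an HTTP server answers at base_url (assumed Hudson address)."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as response:
            # Any 2xx/3xx status means the web interface is being served.
            return 200 <= response.status < 400
    except urllib.error.HTTPError:
        # The server answered, but with an error status (for example when
        # a login is required). It is still up.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, host unreachable or timeout: nothing is listening.
        return False
```

Calling `hudson_is_up()` on the build box itself should return `True` shortly after installation; pass another URL to check the box from a different machine on the network.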

The world’s smallest Hudson server

This is the smallest Hudson instance we’ve heard of so far. It runs in a case measuring 11.5 x 10.0 x 2.6 centimeters. The power consumption is around 8 watts when building (including the consumption of the measuring device itself), and it should drop even further once we replace the mechanical hard disk with a solid state disk (SSD).
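For the curious, the case volume follows directly from those dimensions. This is just the arithmetic as a small sketch; the function name `case_volume_cm3` is ours.

```python
# Case dimensions in centimeters, as given in the article.
WIDTH_CM, DEPTH_CM, HEIGHT_CM = 11.5, 10.0, 2.6


def case_volume_cm3(width, depth, height):
    """Volume of a box-shaped case in cubic centimeters."""
    return width * depth * height


volume = case_volume_cm3(WIDTH_CM, DEPTH_CM, HEIGHT_CM)
# 1 liter is 1000 cubic centimeters.
print(f"{volume:.0f} cm^3 = {volume / 1000:.2f} liters")
```

That works out to roughly 299 cubic centimeters, or about 0.3 liters.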

Given the performance specifications above, you should not expect a speed wonder. The fit-PC2 finished the project’s build in 9:50 minutes, which is dangerously close to the ten-minute mark for acceptable continuous feedback. So this box will not go into regular duty, but will return home with me (remember, I bought it privately).
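To put a number on "dangerously close", here is a small sketch that computes the headroom between a build time and the ten-minute feedback limit. The helper names `parse_mmss` and `headroom` are hypothetical, and the limit is simply the rule of thumb mentioned above.

```python
from datetime import timedelta

# Rule-of-thumb limit for acceptable continuous feedback.
FEEDBACK_LIMIT = timedelta(minutes=10)


def parse_mmss(text):
    """Parse a build time written as 'MM:SS' into a timedelta."""
    minutes, seconds = text.split(":")
    return timedelta(minutes=int(minutes), seconds=int(seconds))


def headroom(build_time, limit=FEEDBACK_LIMIT):
    """Time left before a build exceeds the feedback limit (negative if over)."""
    return limit - build_time


margin = headroom(parse_mmss("09:50"))
print(f"Headroom: {margin.total_seconds():.0f} seconds")
```

For our build that leaves ten seconds of headroom, so any growth in the project would push the box over the limit.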

Conclusion

The whole purpose of this experiment was to get used to a new era of microcomputers. They are palm-sized and consume so little power that they could almost run on batteries, yet they are fully equipped with standard components and powerful enough to perform regular tasks. The fit-PC2 is a strong example of these devices.

Show off your Hudson server

Well, to be honest, another purpose of this experiment was to show off our Hudson skills: we run Hudson instances ranging from heterogeneous slave farms down to this single 300 cubic centimeter box. We would like to hear about your Hudson instance. You may add a comment and/or share a link to your story. Maybe the Hudson wiki is the ultimate place to gather all the stories.

P.S. This blog entry’s title is an adaptation of the title of my favorite childhood movie.