This blog article is a story about an idea, not an actual project report. If it were a movie, it would feature the “based on real events” disclaimer.

The warehouse

Imagine the warehouse of a medium-sized company. You would expect a medium-sized warehouse, but in reality, the number of distinct items in this warehouse is nearly as large as in a big company. The difference might be the stock count of each item, but the number of items is big. So big that each item has its own “item ID”, which is also used as the location identifier in the warehouse. Let’s look at three (contrived) examples:

- 211 725: Retaining screw, 8 mm

- 413 114: Power transformer, 5 A

- 413 115: Power transformer, 10 A

As you can see, different item groups have numbers with a large numerical distance while similar items are numerically close. This makes sense for the engineers, who use these numbers by muscle memory, and for navigating the warehouse. If you read the first three digits, you already know where to turn in the large hall. Once you’ve arrived in the general area, the next three digits lead you to the exact storage space.

The operators

But that’s not how it works. The warehouse workers cannot read. Yes, you’ve read that right. The warehouse is operated by humans and the workers are not familiar with digits and numbers. They decipher each digit on its own and cannot cross-check with the article name. They navigate the warehouse with a best-effort approach. The difference between item 413114 and item 413115 is negligible for them. It’s the same thing anyway – unless you can read (and understand) that one of them blows up above 5 amperes and the other one doesn’t. And this is a problem for the engineers. The difference between a “Power transformer able to take 10 amperes” and a “Power transformer (5 A), aka molten copper lump” is the difference between a successful and a failed project.

So what can you do? Teach the warehouse workers how to read and deal with numbers? That would be a good approach if the turnover rate among them weren’t so high. What else can we do? We can abstract the problem at hand, apply a suitable solution approach and see if it works.

The abstraction

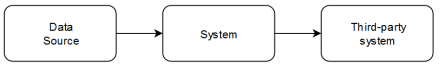

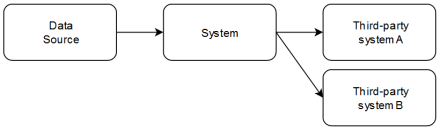

If you think about the situation in abstract terms, you are dealing with unreliable data transmission. You send your item list to the warehouse and receive a collection of loosely related items. That’s similar to sending data over a faulty cable. To mitigate transmission errors, we’ve invented checksums. Each suitable part of the transmission is validated (or invalidated) by a checksum.

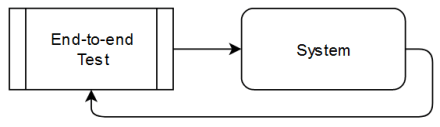

In our case, the “suitable part of the transmission” is each single item. We should add a checksum to the item list! Instead of requesting item 413114, we request 413114/7, while item 413115 is requested as 413115/1. Now, we have a clear indicator for wrong or right. But it is still an indicator in a foreign alphabet. If you ignore the difference between 4 and 5, why not also ignore the difference between 7 and 1?
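If you want to play with the idea, here is a minimal sketch of a per-item check digit. The story doesn’t say which scheme produces the 7 and the 1 above, so this sketch swaps in the well-known Luhn algorithm as a stand-in; its check digits therefore differ from the ones in the text. The function names are made up for illustration.

```python
def luhn_check_digit(item_id: str) -> int:
    """Return the Luhn check digit for a string of decimal digits.

    Assumption: Luhn is only a stand-in here. The article does not name
    the checksum scheme behind its example digits.
    """
    total = 0
    # Walk from the rightmost digit to the left and double every second
    # digit, starting with the rightmost one.
    for index, char in enumerate(reversed(item_id)):
        value = int(char)
        if index % 2 == 0:
            value *= 2
            if value > 9:
                value -= 9  # same as adding the two digits of the product
        total += value
    return (10 - total % 10) % 10


def with_check_digit(item_id: str) -> str:
    """Format an item ID in the 'ID/check' style used on the item list."""
    return f"{item_id}/{luhn_check_digit(item_id)}"


def is_valid(tagged: str) -> bool:
    """Check whether an 'ID/check' string carries the matching check digit."""
    item_id, _, check = tagged.partition("/")
    if not (item_id.isdigit() and check.isdigit()):
        return False
    return int(check) == luhn_check_digit(item_id)


if __name__ == "__main__":
    for item in ("413114", "413115"):
        print(with_check_digit(item))
    # Picking the wrong neighbour while keeping the requested check digit fails:
    print(is_valid("413115/" + str(luhn_check_digit("413114"))))
```

Whatever scheme you pick, the point stays the same: two neighbouring item IDs should end up with visibly different check digits.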

The emojification

But what if we don’t rely on numbers or characters, but on something every human can understand, regardless of literacy level? What if we transpose the numbers into an emoji alphabet? Let 413114 be 😄🌵☁️🌵🌵😄 and 413115 be 😄🌵☁️🌵🌵🏠. More importantly, the checksum is in emoji, too:

😄🌵☁️🌵🌵😄 (413114)

🚗 (7)

vs.

😄🌵☁️🌵🌵🏠 (413115)

🌵 (1)

Even if you only glance at the emoji series (and fail to notice the difference at the end), you still have to acknowledge that your checksum doesn’t fit. A cactus is no car, regardless of your literacy.

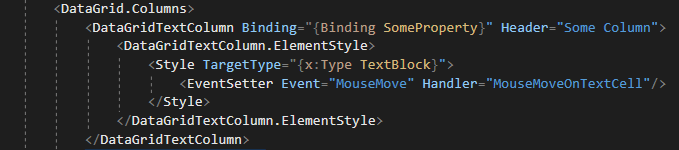

This transposition of numbers into the iconographic realm plays right into every human’s built-in ability to distinguish concrete objects. Numbers, digits and characters are (more) abstract concepts, but a cloud is recognizable as a cloud even if you draw it by hand and without care. The transposition is quite easily reversible: you only have to remember ten number/emoji pairs (or eleven, if your checksum has an extra character). And nobody stops you from printing both on the item list and the warehouse storage boxes.
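As a rough sketch, the ten-pair table and both directions of the transposition fit in a few lines of Python. The pairs for 1, 3, 4, 5 and 7 are taken from the examples above; the emoji for 0, 2, 6, 8 and 9 are my own hypothetical picks, and the function names are invented for illustration. The check digit is passed in as-is because the story doesn’t specify how it is computed.

```python
# Digit-to-emoji table. The pairs for 1, 3, 4, 5 and 7 come from the
# article's examples; the other five are hypothetical picks.
DIGIT_TO_EMOJI = {
    "0": "🍎", "1": "🌵", "2": "🐟", "3": "☁️", "4": "😄",
    "5": "🏠", "6": "🔑", "7": "🚗", "8": "⚽", "9": "🌙",
}

# Some emoji carry an invisible "variation selector" code point (U+FE0F).
# Stripping it leaves one code point per emoji, which keeps decoding simple.
VARIATION_SELECTOR = "\ufe0f"
EMOJI_TO_DIGIT = {
    emoji.replace(VARIATION_SELECTOR, ""): digit
    for digit, emoji in DIGIT_TO_EMOJI.items()
}


def to_emoji(digits: str) -> str:
    """Transpose a string of decimal digits into the emoji alphabet."""
    return "".join(DIGIT_TO_EMOJI[d] for d in digits)


def to_digits(emojis: str) -> str:
    """Reverse the transposition; raises KeyError on an unknown symbol."""
    stripped = emojis.replace(VARIATION_SELECTOR, "")
    return "".join(EMOJI_TO_DIGIT[symbol] for symbol in stripped)


def label(item_id: str, check_digit: str) -> str:
    """Build one label line that shows digits and emoji side by side."""
    return (
        f"{item_id}/{check_digit}  "
        f"{to_emoji(item_id)} / {to_emoji(check_digit)}"
    )


if __name__ == "__main__":
    # Check digits 7 and 1 are the ones used in the article's examples.
    print(label("413114", "7"))
    print(label("413115", "1"))
```

A label printed this way keeps the engineers’ digits and the workers’ pictures on the same line, so neither side has to translate in their head.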

And the best thing? You don’t even have to invent the transposition yourself. Just use the existing work of others by checking out emojisum by Vincent Batts or ecoji by Keith Turner.

The only thing that is stopping you is that ancient dot matrix printer that prints the item lists on continuous paper.