Let me start this blog post with a disclaimer: I’m really convinced of the value of multilingual programming and also think that applying the “right tool for the job” is a good thing. But there is a fallacy around this concept in programming that I want to point out here. The fallacy doesn’t invalidate the concept; keep that in mind.

Polyglot cineasts

Let me start with an odd thought: What if there was a movie, a complicated international thriller about a political intrigue, set in over half a dozen countries? The actors of each country speak their native tongue and no subtitles are provided. Who would be able to follow the plot? Only a chosen few truly polyglot cineasts would ever appreciate the movie. Most of us wouldn’t want to see it.

Polyglot programming

Our last web application project comprised half a dozen languages (Groovy, Java, HTML, CSS, HQL/SQL, Ant). We could easily include more programming languages if we felt the need. Adding Clojure, Scala or Ruby/JRuby doesn’t sound absurd to us. A programmer who knows numerous programming languages and can switch between them is called a “Polyglot Programmer”.

The main justification for heterogeneous (polyglot) projects is often the concept of “using the right tool for the job”. The job is often a subtask of the whole project, like building the project, accessing the database or implementing the ever-changing business logic. For each subtask, a different language might outshine its competitors. Apart from some reasonable doubt about the hidden cost of this approach, there is a misconception about the term “tool”.

Programming languages aren’t tools

If you use a tool in (basic or advanced) engineering, let’s say a hammer to drive some nails into a wooden board or a screwdriver to take apart your computer, you’ll put the tool aside as soon as “the job” is finished. The resulting product (a new wooden cabinet or a collection of circuit boards) doesn’t include the tool. Most of the time, your job is really finished, without “change requests” to the product.

If your tool happens to be a programming language, you’ll produce source code bound to the tool. Without the tool, the product doesn’t work at all. If you regard your compiled binaries as “the product”, you can’t deal with “change requests”, a concept that programmers learn early and painfully. The product of a programmer obviously is source code. And in this respect, programming languages don’t act as tools, but as materials. Tools go away, materials stick.

Programming languages are materials

As source code is tied to its programming language, the two form a conceptual unit. So I suggest changing the term to “using the right material for the job” when speaking about programming languages. This is a more profound decision than choosing between a Phillips or a TORX screwdriver. Materials need to last long after the tools have been put aside.

But there are tools, too

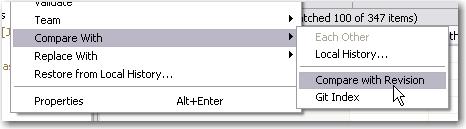

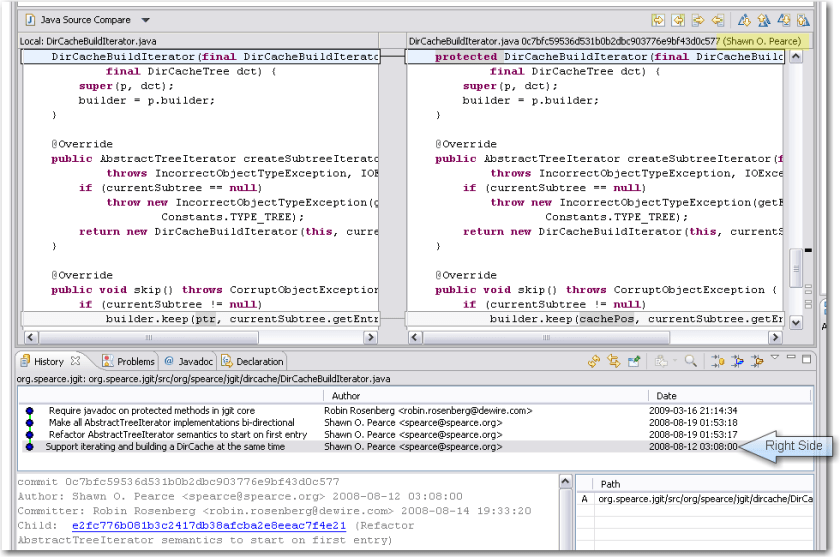

In my web application example above, we used a lot of tools. Grails is our framework of choice, Jetty our web container to deploy to, the Spring Framework provides mighty utilities and we used IDEA to bolt it all together. We could easily swap Jetty for Tomcat or IDEA for Eclipse without changing the source code (the example doesn’t work that easily for Grails and Spring, though). Tools need to be replaceable or even disposable.

Summary

The term “the right tool for the job” cannot easily be applied to programming languages, as they aren’t tools, but materials. This is why polyglot programming is dangerous when used heavily in a single project. It’s easy to end up with a tangled “amalgam project”.

Two more disclaimers:

- If chosen right, “composite construction” is a powerful concept that unifies the advantages of two materials instead of adding up their drawbacks.

- Being multilingual is advantageous for a programmer. Just don’t show it all off in one project.