One promise of SQL for application developers is that changing the database management system (DBMS) is not that of a big deal. Due to the many specialties and not complete standards conformance of the database vendors it can be a big task to migrate from one DBMS vendor to another.

Nevertheless, there are plenty of good reasons to do so:

- Cost of buying, running and maintaining the DBMS

- Limitations of the current DBMS like performance, tool support, character sets, naming, data types and sizes etc.

- Missing features like geospatial support, clustering, replication, sharding, timeseries support and so on

- Support or requirements on the customers or operators side

Some of our long running projects that started several years ago had the requirement to work with an Oracle DBMS, version 8i at that time. Now, more than 10 years later our customer provides and prefers to host a PostgreSQL 13 cluster. Of course she would like us to migrate our applications over to the new DBMS and eventually get rid of the Oracle installation.

Challenges for the migration

Even though PostgreSQL is supports most of SQL:2016 core and most important features of Oracle there are enough differences and subtleties that make migration non-trivial. The most obvious items to look out for are

- different column type names

- SQL features and syntactical differences (sequences!)

- PL/SQL functions syntax and features

Depending on your usage of database specific features you have to assess how much work and risk is expected.

Tools and migration process

Fortunately, there is a quite mature tool that can aid you along the process called ora2pg. It has tons of options to help you customizing the migration and a quite helpful assessment of the task ahead. The migration report looks like this:

-------------------------------------------------------------------------------

Ora2Pg v21.1 - Database Migration Report

-------------------------------------------------------------------------------

Version Oracle Database 12c Enterprise Edition Release 12.1.0.2.0

Schema NAOMI-TEST

Size 91.44 MB

-------------------------------------------------------------------------------

Object Number Invalid Estimated cost Comments Details

-------------------------------------------------------------------------------

DATABASE LINK 0 0 0.00 Database links will be exported as SQL/MED PostgreSQL's Foreign Data Wrapper (FDW) extensions using oracle_fdw.

FUNCTION 1 0 1.00 Total size of function code: 0 bytes.

GLOBAL TEMPORARY TABLE 60 0 168.00 Global temporary table are not supported by PostgreSQL and will not be exported. You will have to rewrite some application code to match the PostgreSQL temporary table behavior. ht_my_table <--- SNIP --->.

INDEX 69 0 6.90 0 index(es) are concerned by the export, others are automatically generated and will do so on PostgreSQL. Bitmap will be exported as btree_gin index(es). Domain index are exported as b-tree but commented to be edited to mainly use FTS. Cluster, bitmap join and IOT indexes will not be exported at all. Reverse indexes are not exported too, you may use a trigram-based index (see pg_trgm) or a reverse() function based index and search. Use 'varchar_pattern_ops', 'text_pattern_ops' or 'bpchar_pattern_ops' operators in your indexes to improve search with the LIKE operator respectively into varchar, text or char columns.

JOB 0 0 0.00 Job are not exported. You may set external cron job with them.

SEQUENCE 4 0 1.00 Sequences are fully supported, but all call to sequence_name.NEXTVAL or sequence_name.CURRVAL will be transformed into NEXTVAL('sequence_name') or CURRVAL('sequence_name').

SYNONYM 0 0 0.00 SYNONYMs will be exported as views. SYNONYMs do not exists with PostgreSQL but a common workaround is to use views or set the PostgreSQL search_path in your session to access object outside the current schema.

TABLE 225 0 72.00 495 check constraint(s). Total number of rows: 264690. Top 10 of tables sorted by number of rows:. topt has 52736 rows. po has 50830 rows. notification has 18911 rows. timeline_entry has 16556 rows. char_sample_types has 11400 rows. char_safety_aspects has 9488 rows. char_sample_props has 5358 rows. tech_spec has 4876 rows. mail_log_entry has 4778 rows. prop_data has 4358 rows. Top 10 of largest tables:.

-------------------------------------------------------------------------------

Total 359 0 248.90 248.90 cost migration units means approximatively 3 man-day(s). The migration unit was set to 5 minute(s)

-------------------------------------------------------------------------------

Migration level : A-3

-------------------------------------------------------------------------------

Migration levels:

A - Migration that might be run automatically

B - Migration with code rewrite and a human-days cost up to 5 days

C - Migration with code rewrite and a human-days cost above 5 days

Technical levels:

1 = trivial: no stored functions and no triggers

2 = easy: no stored functions but with triggers, no manual rewriting

3 = simple: stored functions and/or triggers, no manual rewriting

4 = manual: no stored functions but with triggers or views with code rewriting

5 = difficult: stored functions and/or triggers with code rewriting

-------------------------------------------------------------------------------

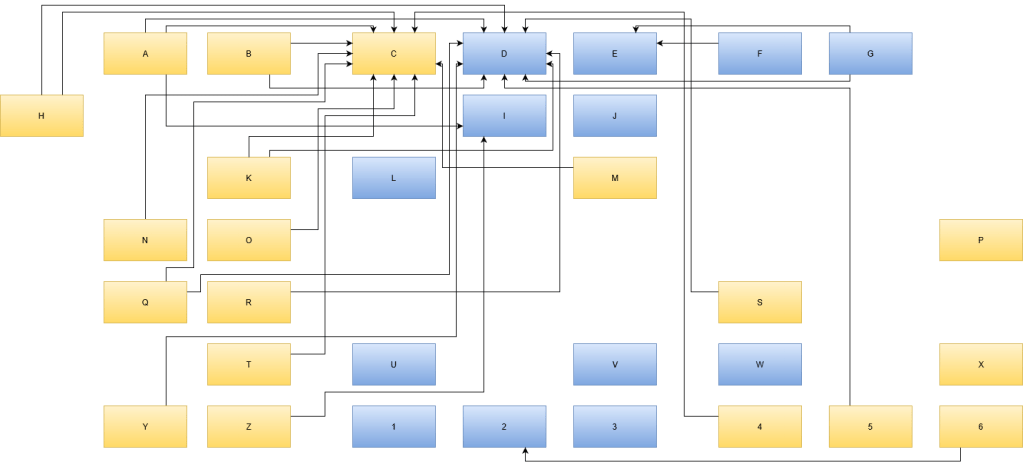

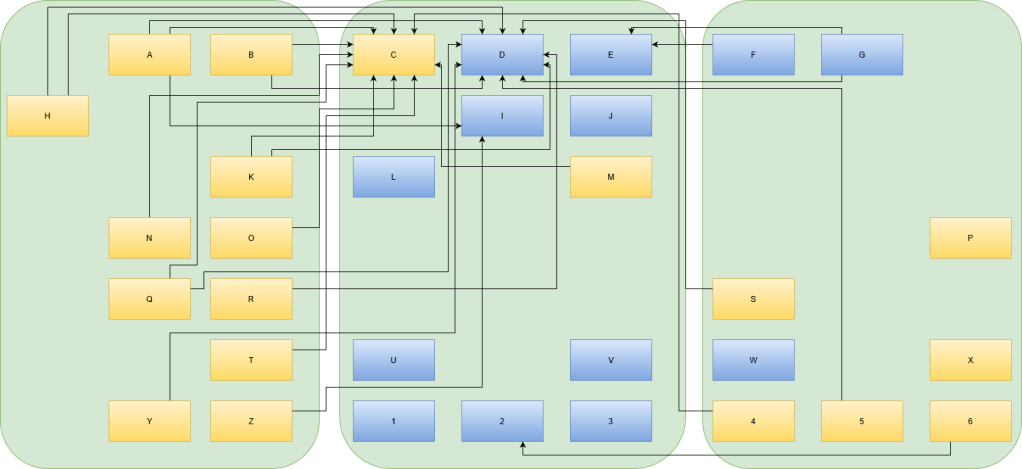

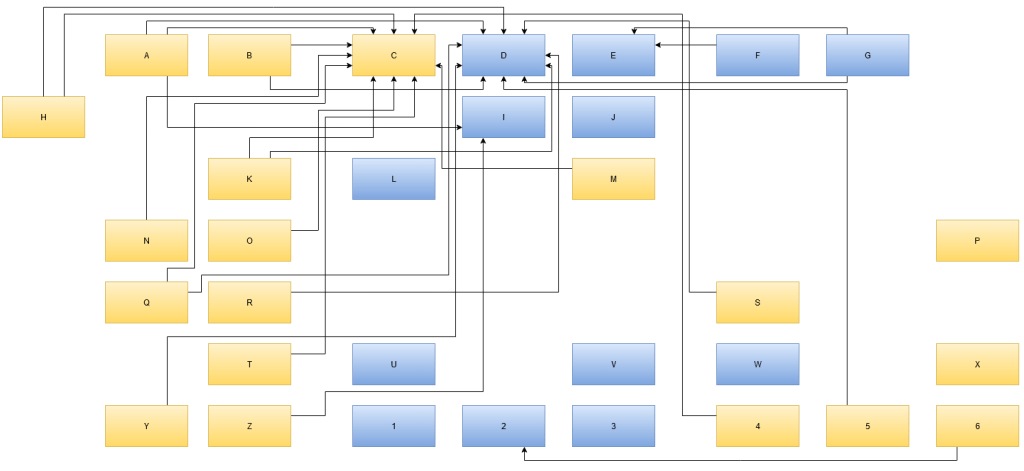

The tool is written in Perl, so I decided to put and run it inside Docker containers because I did not want to mess with my working machine or some VMs. To have quick turnaround times with my containers I split up the process into 3 steps:

- Export of the schema and data using a docker container

- On success copy the ora2pg project to the host

- Import the schema and data using another docker container

The ora2pg migration project is copied to the host machine allowing you to inspect the export and make adjustments if need be. Then you can copy it to the import container or simply bind mount the directory containing the ora2pg project.

The Dockerfile for the export image looks like this

FROM centos:7 # Prepare the system for ora2pg RUN yum install -y wget RUN wget https://yum.oracle.com/RPM-GPG-KEY-oracle-ol7 -O /etc/pki/rpm-gpg/RPM-GPG-KEY-oracle COPY ol7-temp.repo /etc/yum.repos.d/ RUN yum install -y oraclelinux-release-el7 RUN mv /etc/yum.repos.d/ol7-temp.repo /etc/yum.repos.d/ol7-temp.repo.disabled RUN yum install -y oracle-instantclient-release-el7 RUN yum install -y oracle-instantclient-basic RUN yum install -y oracle-instantclient-devel RUN yum install -y oracle-instantclient-sqlplus RUN yum install -y perl perl-CPAN perl-DBI perl-Time-HiRes perl-YAML perl-local-lib make gcc RUN yum install -y perl-App-cpanminus RUN cpanm CPAN::Config RUN cpanm CPAN::FirstTime ENV LD_LIBRARY_PATH=/usr/lib/oracle/21/client64/lib ENV ORACLE_HOME=/usr/lib/oracle/21/client64 RUN perl -MCPAN -e 'install DBD::Oracle' COPY ora2pg-21.1.tar.gz /tmp WORKDIR /tmp RUN tar zxf ora2pg-21.1.tar.gz && cd ora2pg-21.1 && perl Makefile.PL && make && make install RUN mkdir -p /naomi/migration RUN ora2pg --project_base /ora2pg --init_project my-migration WORKDIR /ora2pg COPY ora2pg.conf /ora2pg/my-migration/config/ CMD ora2pg -t SHOW_VERSION -c config/ora2pg.conf && ora2pg -t SHOW_TABLE -c config/ora2pg.conf\ && ora2pg -t SHOW_REPORT --estimate_cost -c config/ora2pg.conf\ && ./export_schema.sh && ora2pg -t INSERT -o data.sql -b ./data -c ./config/ora2pg.conf

Once the export looks good you can work on importing everything. The Dockerfile for the import image looks like this:

FROM centos:7 # Prepare the system for ora2pg RUN yum install -y wget RUN wget https://yum.oracle.com/RPM-GPG-KEY-oracle-ol7 -O /etc/pki/rpm-gpg/RPM-GPG-KEY-oracle COPY ol7-temp.repo /etc/yum.repos.d/ RUN yum install -y oraclelinux-release-el7 RUN mv /etc/yum.repos.d/ol7-temp.repo /etc/yum.repos.d/ol7-temp.repo.disabled RUN yum install -y oracle-instantclient-release-el7 RUN yum install -y oracle-instantclient-basic RUN yum install -y oracle-instantclient-devel RUN yum install -y oracle-instantclient-sqlplus RUN yum install -y postgresql-server RUN yum install -y perl perl-CPAN perl-DBI perl-Time-HiRes perl-YAML perl-local-lib make gcc RUN yum install -y perl-App-cpanminus RUN cpanm CPAN::Config RUN cpanm CPAN::FirstTime ENV LD_LIBRARY_PATH=/usr/lib/oracle/21/client64/lib ENV ORACLE_HOME=/usr/lib/oracle/21/client64 RUN perl -MCPAN -e 'install DBD::Oracle' COPY ora2pg-21.1.tar.gz /tmp WORKDIR /tmp RUN tar zxf ora2pg-21.1.tar.gz && cd ora2pg-21.1 && perl Makefile.PL && make && make install # you need to mount the project volume to /ora2pg WORKDIR /ora2pg CMD ./import_all.sh -d my_target_db -h $pg_host -U myuser -o myowner

Our target database runs on another host, so you need credentials to authenticate and perform all the required actions. Therefore we are the import container interactively. The PowerShell command for the import looks like this

docker run -it --rm -e pg_host=192.168.56.1 -v $PWD/ora2pg/my-migration:/ora2pg pgimport

The import script allows you to create the schema, sequences, indexes, constraints and load the data. I suggest adding the contraints after importing the data – a workflow supported by the import_all.sh script.

That way we got our Oracle database migrated into a PostgreSQL database. Unfortunately, this is only one part of the whole migration. The other part is making changes to the application code to correctly use the new database.