The concept described in this blog entry evoked a lot of different metaphors and descriptions when our team discussed it, so don’t take my words or thoughts as the one true way to talk about it. The concept is the “testing gap”: the distance between the code under test and the test’s vantage point.

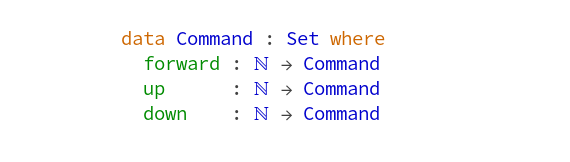

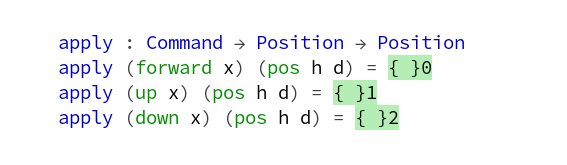

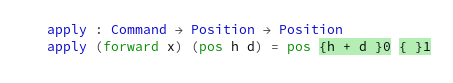

Before I describe my metaphor for it with some weird visuals, let’s look at some code:

    public Budget(String denotation, int maximumHours) {
        this.denotation = denotation;
        this.maximumHours = maximumHours;
        this.currentHours = maximumHours;
    }

This is the constructor for an entity, a domain class that represents a budget of work hours that is slowly used up as you work on the customer’s project. There is not much going on in this code except one little detail of the domain: New budgets always start fully “filled up”, in that the currentHours are set to the maximumHours. You can’t create a budget that is already half empty with this code.
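For readers who want to run the rule in isolation, here is a minimal sketch of what the surrounding class might look like. The field declarations and `getDenotation()` are assumptions made for illustration; only the constructor and the two hour getters appear in this post, and the real entity surely has more to it (such as a way to use up hours).

```java
// Sketch of the entity; everything except the constructor body is assumed.
final class Budget {
    private final String denotation;
    private final int maximumHours;
    private int currentHours; // not final: hours get used up over time (mutators omitted)

    public Budget(String denotation, int maximumHours) {
        this.denotation = denotation;
        this.maximumHours = maximumHours;
        this.currentHours = maximumHours; // business rule: new budgets start full
    }

    public String getDenotation() { return denotation; } // assumed accessor
    public int getMaximumHours() { return maximumHours; }
    public int getCurrentHours() { return currentHours; }
}

final class BudgetDemo {
    public static void main(String[] args) {
        Budget budget = new Budget("current is maxed", 100);
        System.out.println(budget.getMaximumHours()); // prints 100
        System.out.println(budget.getCurrentHours()); // prints 100
    }
}
```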

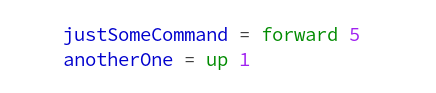

Such a domain concept or “business rule” requires a test that ensures it is still in place:

    @Test
    public void has_initially_current_hours_set_to_maximum() {
        Budget target = new Budget(
            "current is maxed",
            100
        );
        assertThat(target.getMaximumHours()).isEqualTo(100);
        assertThat(target.getCurrentHours()).isEqualTo(100);
    }

This is a fairly boring unit test that ensures that freshly created budgets have all their working hours still available.

In our example, the entity lives in the core of a web application that provides an endpoint to create new budgets. We have a test for the endpoint, of course:

    @Test
    public void stores_new_budget() throws Exception {
        this.web.perform(
            post("/budgets")
                .contentType(MediaType.APPLICATION_JSON)
                .content("{\"denotation\": \"new budget\", \"maximumHours\": 300}")
        )
        .andExpect(status().isOk())
        .andExpect(content().json("{\"denotation\": \"new budget\", \"maximumHours\": 300, \"currentHours\": 300}"));
    }

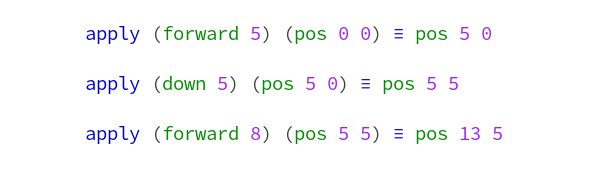

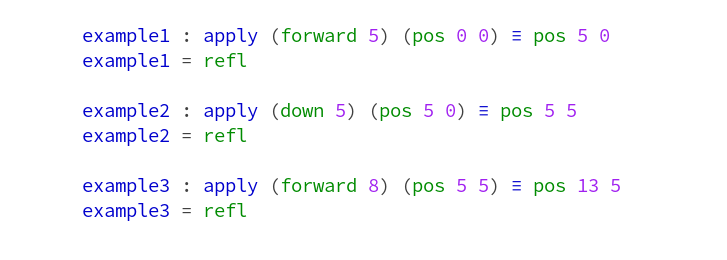

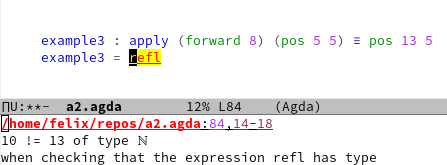

You can shudder at the code formatting or the necessity to escape your JSON data into inscrutability. At least the second problem more or less disappears with current Java versions. But that’s not the point today. The point is that this is effectively the same test as above, but with a gap in between.
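As a brief aside on the escaping problem: text blocks, a standard language feature since Java 15, let you write the JSON without backslashes. A minimal sketch, with class and constant names made up for illustration:

```java
final class JsonTextBlockDemo {
    // Escaped form, as used in the endpoint test.
    static final String ESCAPED =
            "{\"denotation\": \"new budget\", \"maximumHours\": 300}";

    // The same content as a text block (requires Java 15 or newer).
    // The closing delimiter sits on the content line, so no trailing
    // newline is added, and the common indentation is stripped.
    static final String TEXT_BLOCK = """
            {"denotation": "new budget", "maximumHours": 300}""";

    public static void main(String[] args) {
        System.out.println(ESCAPED.equals(TEXT_BLOCK)); // prints true
    }
}
```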

If you wrote just the second test, your code coverage metrics would probably not decrease. Your business rule would still be tested. So why write the first test if it adds nothing to the safety net?

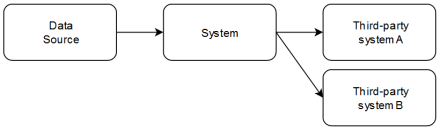

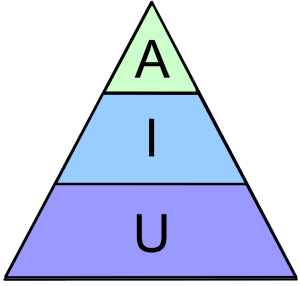

This can be explained with the idea that there is a considerable “testing gap” between the second test and the business rule. The test does cover the entity’s constructor code and states explicitly that the currentHours property should be set to the same value as the maximumHours property. But it also defines the communication protocol as HTTP and the data format as JSON, and it travels through code that finds an “endpoint” for the given URL, maps the given JSON to a constructor call and serializes the resulting object as JSON back to the requester. That is a lot of padding just to test the constructor’s third line.
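To make the gap tangible without a web framework, here is a rough sketch in plain Java. `BudgetEndpoint` and its regex-based parsing are hypothetical stand-ins for the routing, JSON mapping and serialization that a real application would delegate to its framework; the compact `Budget` copy (including the assumed `getDenotation()`) is only there so the sketch compiles on its own.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Compact copy of the entity so that this sketch is self-contained.
final class Budget {
    private final String denotation;
    private final int maximumHours;
    private int currentHours;

    Budget(String denotation, int maximumHours) {
        this.denotation = denotation;
        this.maximumHours = maximumHours;
        this.currentHours = maximumHours; // the business rule
    }

    String getDenotation() { return denotation; }
    int getMaximumHours() { return maximumHours; }
    int getCurrentHours() { return currentHours; }
}

// Hypothetical stand-in for everything between the HTTP request and the entity.
final class BudgetEndpoint {
    private static final Pattern DENOTATION =
            Pattern.compile("\"denotation\"\\s*:\\s*\"([^\"]*)\"");
    private static final Pattern MAXIMUM_HOURS =
            Pattern.compile("\"maximumHours\"\\s*:\\s*(\\d+)");

    // Request JSON in, response JSON out: all that a far-away test can observe.
    static String create(String requestJson) {
        Budget budget = new Budget(
                firstGroup(DENOTATION, requestJson),
                Integer.parseInt(firstGroup(MAXIMUM_HOURS, requestJson)));
        return "{\"denotation\": \"" + budget.getDenotation()
                + "\", \"maximumHours\": " + budget.getMaximumHours()
                + ", \"currentHours\": " + budget.getCurrentHours() + "}";
    }

    private static String firstGroup(Pattern pattern, String json) {
        Matcher matcher = pattern.matcher(json);
        if (!matcher.find()) {
            throw new IllegalArgumentException("Missing field for " + pattern);
        }
        return matcher.group(1);
    }
}

final class TestingGapDemo {
    public static void main(String[] args) {
        // Small gap: the check touches the entity and nothing else.
        Budget budget = new Budget("new budget", 300);
        System.out.println(budget.getCurrentHours() == 300); // prints true

        // Large gap: the same rule, observed through parsing and serialization.
        String response = BudgetEndpoint.create(
                "{\"denotation\": \"new budget\", \"maximumHours\": 300}");
        System.out.println(response.contains("\"currentHours\": 300")); // prints true
    }
}
```

In this sketch, the second check would also fail if a field were renamed, the serializer's spacing changed or the parsing broke, even though the business rule itself was untouched.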

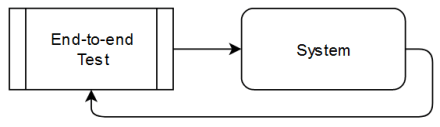

The first test has virtually no testing gap. It knows nothing about the web, data formats or whatever else the application consists of. It just looks at the entity and its behaviour in isolation.

There are perfectly valid reasons to write the second test, but it should not be the only test that ensures the business rule in our example. The second test “sees too much” from its vantage point to pay attention to a little detail like the business rule.

In case you didn’t quite get the concept of the testing gap yet, here is how I imagine it in my head: If your code under test is a mystery box (really try to picture a shoebox made of cardboard that rattles when you move it), then your test is a big floating eye that uses little cracks and holes in the box to get a quick peek inside. If you expose state through getter methods as in our example, the eye checks the internal state of the box by reading the gauges that are placed on its sides.

If your testing gap is small, the eye hovers up close to the box. It doesn’t see anything else, but it notices every detail of the box.

If you have some testing gap in your test code, the eye is placed at a considerable distance from the mystery box. There are other important things between them. The gauges aren’t directly readable. The eye uses indirect clues and reflections to gather its information. Every time something in the gap’s setup changes, the testing eye needs to adjust its gaze.

Which brings us to the conclusion of this metaphor: If you want things to be looked at in detail, write tests without a testing gap. Otherwise, your tests will have increased execution times, exhibit a strange imprecision in their message (“something in these dozen classes has changed and it might not even be relevant”) and require frequent adjustments that are not related to their testing story.

Or, in the words of my imagination: place your testing eye directly at the entrance of your test’s hideout.

You’ve probably thought about this concept already, in your own terms and metaphors. Can you try to describe it in a comment? For the name alone, we discussed “testing distance”, “testing height”, “testing gap” and others. Perhaps we like your description even better.