In a Java Micronaut application, endpoints are often secured with @Secured(SecurityRule.IS_AUTHENTICATED) together with an authentication provider. In this setup, clients authenticate with API keys, and the authentication provider validates them. If you also publish Swagger documentation so that users can quickly try out the API, they need a way to enter an API key in Swagger UI and have it included automatically in the request headers.
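The authentication provider itself is outside the scope of this article. As a rough, framework-independent sketch, the key check it performs might look like the following. The class name `ApiKeyValidator`, the in-memory key map, and the constant-time comparison via `MessageDigest.isEqual` are assumptions for illustration; in a real Micronaut application this logic would be called from your authentication provider, with keys loaded from secure storage rather than hard-coded.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.util.Map;
import java.util.Optional;

/**
 * Hypothetical API key check that an authentication provider could delegate to.
 * Not part of Micronaut; keys are passed in explicitly for illustration only.
 */
public class ApiKeyValidator {

    // Maps each issued API key to the principal (user or client) it belongs to.
    private final Map<String, String> keysToPrincipals;

    public ApiKeyValidator(Map<String, String> keysToPrincipals) {
        this.keysToPrincipals = Map.copyOf(keysToPrincipals);
    }

    /** Returns the principal for a known key, or empty if the key is missing or unknown. */
    public Optional<String> validate(String headerValue) {
        if (headerValue == null || headerValue.isBlank()) {
            return Optional.empty();
        }
        byte[] presented = headerValue.trim().getBytes(StandardCharsets.UTF_8);
        for (Map.Entry<String, String> entry : keysToPrincipals.entrySet()) {
            byte[] known = entry.getKey().getBytes(StandardCharsets.UTF_8);
            // MessageDigest.isEqual does not stop at the first differing byte
            if (MessageDigest.isEqual(presented, known)) {
                return Optional.of(entry.getValue());
            }
        }
        return Optional.empty();
    }

    public static void main(String[] args) {
        ApiKeyValidator validator = new ApiKeyValidator(Map.of("demo-key-123", "demo-client"));
        System.out.println(validator.validate("demo-key-123")); // Optional[demo-client]
        System.out.println(validator.validate("wrong-key"));    // Optional.empty
    }
}
```

Returning the principal rather than a plain boolean lets the caller build an authenticated identity from it, which is what an authentication provider ultimately needs to produce.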

For a general guide on setting up a Micronaut application with OpenAPI Swagger and Swagger UI, refer to this article.

This article focuses on integrating API key authentication into Swagger so that users can authenticate and test secured endpoints directly in Swagger UI.

Accessing Swagger Without Authentication

To ensure that Swagger is always accessible without authentication, update the application.yml file with the following settings:

```yaml
micronaut:
  security:
    intercept-url-map:
      - pattern: /swagger/**
        access:
          - isAnonymous()
      - pattern: /swagger-ui/**
        access:
          - isAnonymous()
    enabled: true
```

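The two intercept-url-map entries allow anonymous access to the generated OpenAPI files under /swagger and to the Swagger UI assets under /swagger-ui, while enabled: true keeps Micronaut Security active for the rest of the application.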

Defining the Security Schema

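Micronaut OpenAPI reads the standard Swagger annotations (io.swagger.v3.oas.annotations) at compile time to generate the OpenAPI definition. To declare API key authentication, add the @SecurityScheme annotation to a class, for example the application class: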

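```java
import io.micronaut.runtime.Micronaut;
import io.swagger.v3.oas.annotations.enums.SecuritySchemeIn;
import io.swagger.v3.oas.annotations.enums.SecuritySchemeType;
import io.swagger.v3.oas.annotations.security.SecurityScheme;

@SecurityScheme(
        name = "MyApiKey",                 // identifier that security requirements refer to
        type = SecuritySchemeType.APIKEY,  // API key authentication
        in = SecuritySchemeIn.HEADER,      // the key travels in a request header...
        paramName = "Authorization",       // ...named "Authorization"
        description = "API Key authentication"
)
public class Application {
    public static void main(String[] args) {
        Micronaut.run(Application.class, args);
    }
}
```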

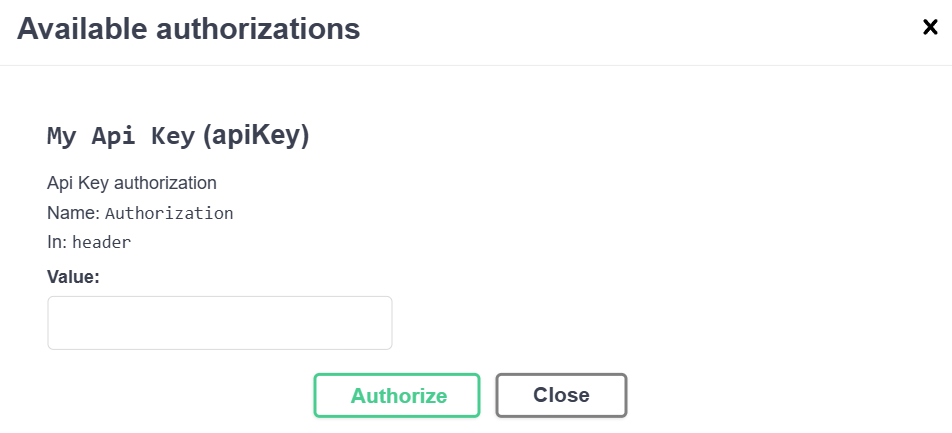

This defines an API key security scheme with the following properties:

- Name: MyApiKey
- Type: APIKEY
- Location: Header (Authorization field)
- Description: Explains how the API key authentication works

Applying the Security Scheme to OpenAPI

Next, reference the scheme from @OpenAPIDefinition so that Swagger applies it. Both annotations can sit on the same application class:

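```java
import io.micronaut.runtime.Micronaut;
import io.swagger.v3.oas.annotations.OpenAPIDefinition;
import io.swagger.v3.oas.annotations.info.Info;
import io.swagger.v3.oas.annotations.security.SecurityRequirement;

@OpenAPIDefinition(
        info = @Info(
                title = "API",
                version = "1.0.0",
                description = "This is a well-documented API"
        ),
        // Apply the "MyApiKey" scheme to every operation by default
        security = @SecurityRequirement(name = "MyApiKey")
)
public class Application {
    public static void main(String[] args) {
        Micronaut.run(Application.class, args);
    }
}
```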

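Declaring the requirement at the @OpenAPIDefinition level makes it the default for every operation in the generated document, so Swagger UI knows to attach the MyApiKey header to each request it sends.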

Conclusion

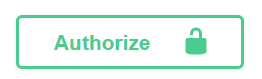

With these settings, Swagger UI displays an Authorize button that opens a dialog for entering the API key.

Users can enter an API key there, and Swagger UI automatically includes it in the Authorization header of every request it sends.
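Under the hood, the request Swagger UI sends is an ordinary HTTP request with one extra header. The sketch below builds an equivalent request with the JDK's java.net.http API without sending it. The base URL, the /hello path, the class name, and the key value are placeholders, not part of the original setup. Because the scheme type is APIKEY rather than HTTP bearer, the header carries the key exactly as entered, with no "Bearer " prefix.

```java
import java.net.URI;
import java.net.http.HttpRequest;

/**
 * Builds (but does not send) a request equivalent to what Swagger UI issues
 * after a user has authorized with the "MyApiKey" scheme. All values are placeholders.
 */
public class SwaggerEquivalentRequest {

    public static HttpRequest securedRequest(String baseUrl, String apiKey) {
        return HttpRequest.newBuilder()
                .uri(URI.create(baseUrl + "/hello"))   // hypothetical secured endpoint
                .header("Authorization", apiKey)       // raw key: apiKey schemes add no prefix
                .GET()
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = securedRequest("http://localhost:8080", "demo-key-123");
        System.out.println(request.method() + " " + request.uri()); // GET http://localhost:8080/hello
        System.out.println(request.headers().firstValue("Authorization").orElse("<missing>")); // demo-key-123
    }
}
```

To actually call the endpoint, pass the request to HttpClient.newHttpClient().send(request, HttpResponse.BodyHandlers.ofString()). With Micronaut Security's defaults, a missing or rejected key should produce a 401 response.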

This is only one way to implement authentication. The @SecurityScheme annotation also supports more advanced flows such as OAuth2 (SecuritySchemeType.OAUTH2), which lets users obtain a token from a token provider directly in Swagger UI.

By setting up API key authentication correctly, you enhance both the security and usability of your API documentation.