At the last dev brunch, I got a recommendation for a talk that tries to explain functional programming differently. What really got me was the effectiveness of the changed vocabulary. I’ve seen this before, in the old talk about test driven development and behaviour driven development. But in my head, I think about unit tests with another overarching metaphor that I’m trying to explain in this blog post series:

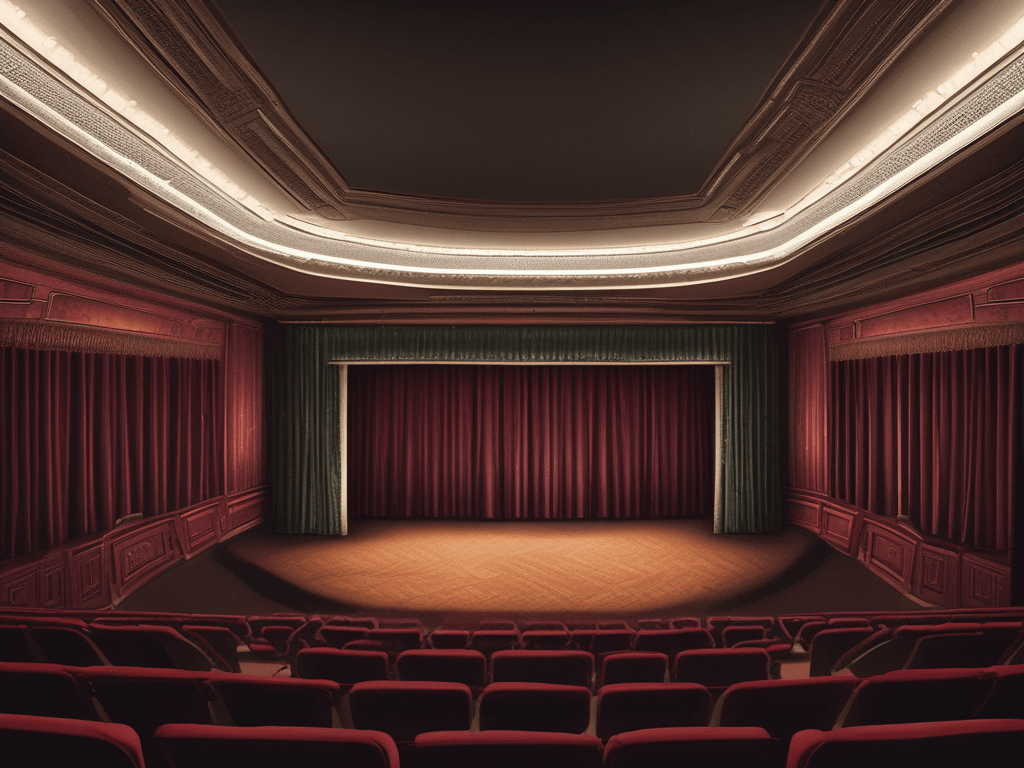

Every unit test is a stage play that tells a short story about your system.

And this metaphor really guides my approach to nearly any aspect of unit testing, from each single line of code to the whole concept of test coverage. So I’m breaking my explanation into (at least) five parts and focusing on one aspect in each part.

Today, we look at the actors.

In each classic play, there are well-known roles that can be played by nearly any human, but always stay the same role. There’s the hero, the (comedic) sidekick and of course, the villain or antagonist. In every show of Romeo and Juliet, there will be a Romeo. It might not be the most convincing Romeo ever, but the role stays the same, no matter the cast.

The same thing is true for every well-formed unit test. There are four roles that always appear on stage:

- target: This is the object under test or the code under test if you don’t use objects. The target is probably different for every unit test you write, but the role is always present. I’ve seen it being called “cut” for “code under test”, but I prefer “target”. If you see a reference named “target” in my test code, you can be sure about the role it plays in the story.

- actual: If you can design your code to adhere to the simple “parameters in, result out” call pattern, the “result out” is the “actual”. It is what your target produced when challenged by the specific parameters of your test. One trick to testable code is to design the “actual” role as being quite simple and “flat”.

- expected: This might be the closest thing to an antagonist in your play. The “expected” role is filled with the value (or values) that your “actual” is measured against. If your “actual” is simple, your “expected” will be simple, too. If your “actual” is too complex, the “expected” role will be overbearing. In any case, the “expected” role is what drives your assertions.

- given: Our hero, the “target”, is often dependent on entry parameters or secondary objects (mocked or not). These sidekicks are part of the “given” role. You might think about the “given-when-then” storytelling structure of behaviour driven development for the name. If you strive for a simple code structure, the required “given” in your unit test should be manageable.

As you can see, the story of a typical unit test is always the same: The target, with the help of the given, produces an actual that competes against the expected.

If this story has a happy ending, your test runs green. If the actual fails the expectation, your test runs red. If the target fails to produce an actual at all (think about an exception or error), your whole play falls apart and the test runs red.
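The three endings can be sketched without any test framework. This is only an illustration: the `Stage` class, its `perform` method and the `Ending` enum are hypothetical names made up for this sketch, and real test runners do considerably more than this.

```java
import java.util.Objects;
import java.util.function.Supplier;

// Hypothetical mini "stage", not part of any real test framework.
final class Stage {

    enum Ending { GREEN, RED }

    // Lets the target perform and measures its actual against the expected.
    static <T> Ending perform(Supplier<T> performance, T expected) {
        final T actual;
        try {
            actual = performance.get();
        } catch (RuntimeException e) {
            // The target produced no actual at all: the play falls apart.
            return Ending.RED;
        }
        // Happy ending only if the actual meets the expected.
        return Objects.equals(actual, expected) ? Ending.GREEN : Ending.RED;
    }
}
```

With this sketch, `Stage.perform(() -> 2 + 3, 5)` ends green, `Stage.perform(() -> 2 + 3, 6)` ends red because the actual fails the expectation, and `Stage.perform(() -> Integer.parseInt("five"), 5)` ends red because no actual is produced at all.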

Enough theory, let’s look at a unit test that uses the four roles:

```java
@Test
public void rounds_up_to_the_next_decimal_power() {
    // given: the configuration that the target depends on
    final Configuration given = new Configuration(
        StringVirtualFile.createFileFromContent(
            "report.config",
            "scale.maximum=2E5"
        )
    );
    // target: the object under test
    final ReportConfiguration target = new ReportConfiguration(
        given,
        SuffixProvider.none
    );
    // actual: what the target produces
    final Optional<Double> actual = target.scaleMaximum();
    // expected: the value the actual is measured against
    final double expected = 1E6;
    assertThat(actual).contains(expected);
}
```

I’ve highlighted the roles for better visibility. Note that for a role to appear in the play, it doesn’t really have to be named explicitly. Most of the time, the last two lines would be combined into one:

```java
assertThat(actual).contains(1E6);
```

You can still see the “expected” role play its part, but not as prominently as before.

You also probably saw the extra “given” that wasn’t highlighted:

```java
SuffixProvider.none
```

It might be relevant to the story or really be an uncredited extra that is not crucial in the target’s journey to produce the correct actual. If that’s the case, it seems appropriate not to name it. We will learn about techniques that I use to make these extras more nondescript in a later part. Right now, we can differentiate between main roles that are named and secondary roles that are just there, as part of the scenery. Just don’t fool your audience by having an unnamed actor contribute an important piece to the story’s success. That might be a cool plot twist, but I’m not here to be surprised.
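To illustrate the difference between an uncredited extra and an actor that deserves a name, here is a small self-contained sketch. Everything in it is an assumption made for illustration: `PriceLabel`, `PriceLabelPlays` and the `check` helper are hypothetical and not taken from the code above, `check` merely stands in for an assertion library, and the `givenAmount`/`givenLocale` naming is just one way to credit several sidekicks.

```java
import java.util.Locale;

// Hypothetical target for this sketch.
final class PriceLabel {
    private final double amount;
    private final Locale locale;

    PriceLabel(double amount, Locale locale) {
        this.amount = amount;
        this.locale = locale;
    }

    boolean isFree() {
        return amount == 0.0;
    }

    String text() {
        // The locale decides the decimal separator.
        return String.format(locale, "%.2f", amount);
    }
}

final class PriceLabelPlays {

    // The locale is an uncredited extra here: it cannot change the ending.
    static void recognizes_a_free_price() {
        final double given = 0.0;
        final PriceLabel target = new PriceLabel(given, Locale.ROOT);
        final boolean actual = target.isFree();
        final boolean expected = true;
        check(actual == expected);
    }

    // The locale contributes to the ending here, so it gets a credited role.
    static void prints_a_decimal_comma_for_german_readers() {
        final double givenAmount = 3.5;
        final Locale givenLocale = Locale.GERMANY;
        final PriceLabel target = new PriceLabel(givenAmount, givenLocale);
        final String actual = target.text();
        final String expected = "3,50";
        check(actual.equals(expected));
    }

    // Stand-in for an assertion library call.
    private static void check(boolean happyEnding) {
        if (!happyEnding) {
            throw new AssertionError("the actual did not meet the expected");
        }
    }
}
```

In the first play, swapping `Locale.ROOT` for any other locale would not change the outcome, so leaving it unnamed deceives nobody. In the second play, an unnamed `Locale.GERMANY` would be exactly the kind of plot twist the audience should be spared.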

Let your tests perform boring plays, but lots of them.

By using the four roles of a test play, you make it clear to the reader (your real audience) what to expect from your test code parts. Don’t name irrelevant test code parts and only omit the role names if there are no extras on stage.

Your audience will still find your play boring (that’s the fate of nearly all test code), but it won’t feel disregarded or, even worse, deceived.

Epilogue

This is the first part of a series. All parts are linked below: